Backtesting is a course of utilized in quantitative finance to guage buying and selling methods utilizing historic knowledge. This helps merchants decide the potential profitability of a technique and determine any dangers related to it, enabling them to optimize it for higher efficiency.

Index rebalancing arbitrage takes benefit of short-term worth discrepancies ensuing from ETF managers’ efforts to attenuate index monitoring error. Main market indexes, similar to S&P 500, are topic to periodic inclusions and exclusions for causes past the scope of this submit (for an instance, confer with CoStar Group, Invitation Houses Set to Be part of S&P 500; Others to Be part of S&P 100, S&P MidCap 400, and S&P SmallCap 600). The arbitrage commerce seems to be to revenue from going lengthy on shares added to an index and shorting those which can be eliminated, with the intention of producing revenue from these worth variations.

On this submit, we glance into the method of utilizing backtesting to guage the efficiency of an index arbitrage profitability technique. We particularly discover how Amazon EMR and the newly developed Apache Iceberg branching and tagging function can deal with the problem of look-ahead bias in backtesting. This may allow a extra correct analysis of the efficiency of the index arbitrage profitability technique.

Terminology

Let’s first focus on a number of the terminology used on this submit:

- Analysis knowledge lake on Amazon S3 – A knowledge lake is a big, centralized repository that means that you can handle all of your structured and unstructured knowledge at any scale. Amazon Easy Storage Service (Amazon S3) is a well-liked cloud-based object storage service that can be utilized as the inspiration for constructing an information lake.

- Apache Iceberg – Apache Iceberg is an open-source desk format that’s designed to offer environment friendly, scalable, and safe entry to giant datasets. It supplies options similar to ACID transactions on high of Amazon S3-based knowledge lakes, schema evolution, partition evolution, and knowledge versioning. With scalable metadata indexing, Apache Iceberg is ready to ship performant queries to quite a lot of engines similar to Spark and Athena by lowering planning time.

- Look–forward bias – This can be a frequent problem in backtesting, which happens when future info is inadvertently included in historic knowledge used to check a buying and selling technique, resulting in overly optimistic outcomes.

- Iceberg tags – The Iceberg branching and tagging function permits customers to tag particular snapshots of their knowledge tables with significant labels utilizing SQL syntax or the Iceberg library, which correspond to particular occasions notable to inner funding groups. This, mixed with Iceberg’s time journey performance, ensures that correct knowledge enters the analysis pipeline and guards it from hard-to-detect issues similar to look-ahead bias.

Testing scope

For our testing functions, take into account the next instance, by which a change to the S&P Dow Jones Indices is introduced on September 2, 2022, turns into efficient on September 19, 2022, and doesn’t turn out to be observable within the ETF holdings knowledge that we are going to be utilizing within the experiment till September 30, 2022. We use Iceberg tags to label market knowledge snapshots to keep away from look-ahead bias within the analysis knowledge lake, which can allow us to check varied commerce entry and exit eventualities and assess the respective profitability of every.

Experiment

As a part of our experiment, we make the most of a paid, third-party knowledge supplier API to determine SPY ETF holdings adjustments and assemble a portfolio. Our mannequin portfolio will purchase shares which can be added to the index, generally known as going lengthy, and can promote an equal quantity of shares faraway from the index, generally known as going brief.

We’ll take a look at short-term holding durations, similar to 1 day and 1, 2, 3, or 4 weeks, as a result of we assume that the rebalancing impact could be very short-lived and new info, similar to macroeconomics, will drive efficiency past the studied time horizons. Lastly, we simulate completely different entry factors for this commerce:

- Market open the day after announcement day (AD+1)

- Market shut of efficient date (ED0)

- Market open the day after ETF holdings registered the change (MD+1)

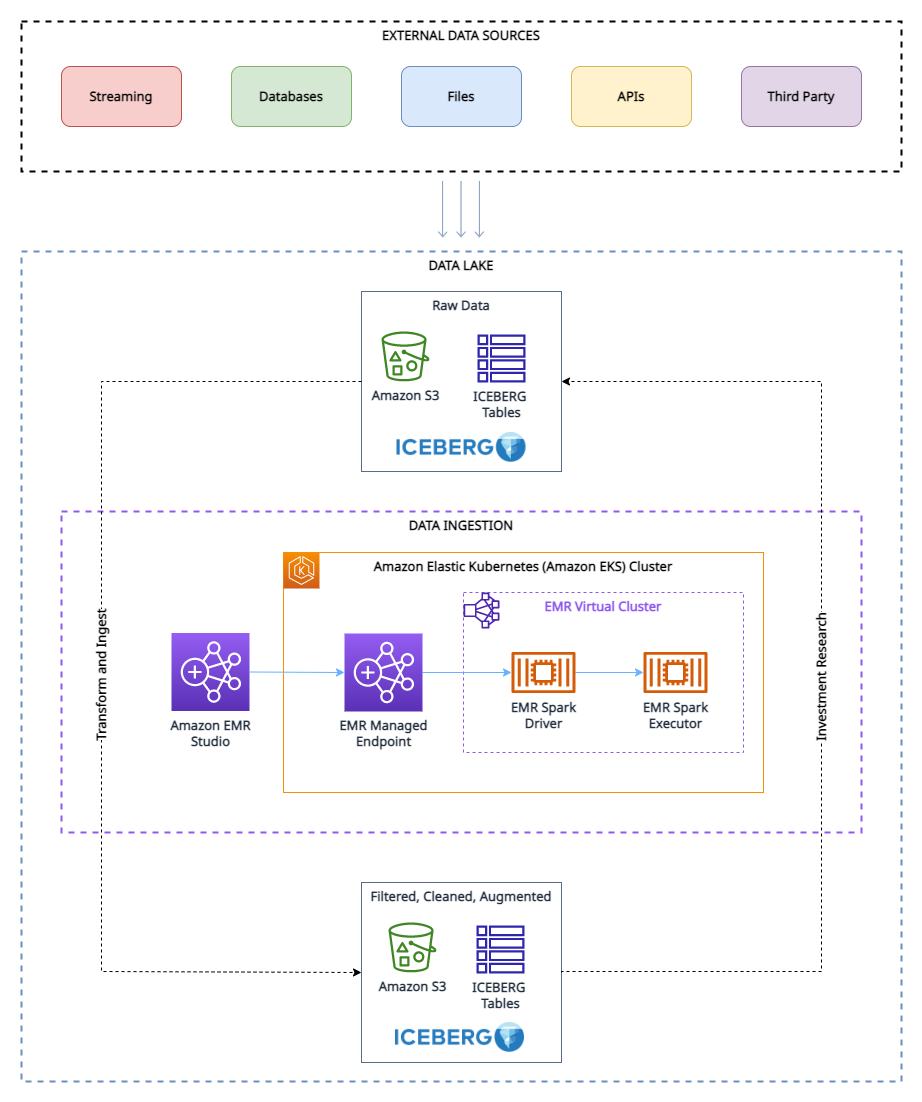

Analysis knowledge lake

To run our experiment, we’ve got have used the next analysis knowledge lake surroundings.

As proven within the structure diagram, the analysis knowledge lake is constructed on Amazon S3 and managed utilizing Apache Iceberg, which is an open desk format bringing the reliability and ease of relational database administration service (RDBMS) tables to knowledge lakes. To keep away from look-ahead bias in backtesting, it’s important to create snapshots of the info at completely different deadlines. Nonetheless, managing and organizing these snapshots may be difficult, particularly when coping with a big quantity of knowledge.

That is the place the tagging function in Apache Iceberg turns out to be useful. With tagging, researchers can create otherwise named snapshots of market knowledge and observe adjustments over time. For instance, they’ll create a snapshot of the info on the finish of every buying and selling day and tag it with the date and any related market situations.

By utilizing tags to arrange the snapshots, researchers can simply question and analyze the info based mostly on particular market situations or occasions, with out having to fret concerning the particular dates of the info. This may be significantly useful when conducting analysis that isn’t time-sensitive or when searching for traits over lengthy durations of time.

Moreover, the tagging function may also assist with different points of knowledge administration, similar to knowledge retention for GDPR compliance, and sustaining lineages of the desk by way of completely different branches. Researchers can use Apache Iceberg tagging to make sure the integrity and accuracy of their knowledge whereas additionally simplifying knowledge administration.

Conditions

To comply with together with this walkthrough, it’s essential to have the next:

- An AWS account with an IAM position that has ample entry to provision the required sources.

- To adjust to licensing concerns, we can’t present a pattern of the ETF constituents knowledge. Due to this fact, it should be bought individually for the dataset onboarding functions.

Answer overview

To arrange and take a look at this experiment, we full the next high-level steps:

- Create an S3 bucket.

- Load the dataset into Amazon S3. For this submit, the ETF knowledge referred to was obtained by way of API name by a third-party supplier, however you too can take into account the next choices:

- You need to use the next prescriptive steerage, which describes how one can automate knowledge ingestion from varied knowledge suppliers into an information lake in Amazon S3 utilizing AWS Knowledge Trade.

- You may as well make the most of AWS Knowledge Trade to pick out from a spread of third-party dataset suppliers. It simplifies the utilization of knowledge recordsdata, tables, and APIs to your particular wants.

- Lastly, you too can confer with the next submit on how one can use AWS Knowledge Trade for Amazon S3 to entry knowledge from a supplier bucket: Analyzing affect of regulatory reform on the inventory market utilizing AWS and Refinitiv knowledge.

- Create an EMR cluster. You need to use this Getting Began with EMR tutorial or we used CDK to deploy an EMR on EKS surroundings with a customized managed endpoint.

- Create an EMR pocket book utilizing EMR Studio. For our testing surroundings, we used a customized construct Docker picture, which comprises Iceberg v1.3. For directions on attaching a cluster to a Workspace, confer with Connect a cluster to a Workspace.

- Configure a Spark session. You possibly can comply with alongside by way of the next pattern pocket book.

- Create an Iceberg desk and cargo the take a look at knowledge from Amazon S3 into the desk.

- Tag this knowledge to protect a snapshot of it.

- Carry out updates to our take a look at knowledge and tag the up to date dataset.

- Run simulated backtesting on our take a look at knowledge to search out probably the most worthwhile entry level for a commerce.

Create the experiment surroundings

We are able to stand up and working with Iceberg by making a desk by way of Spark SQL from an current view, as proven within the following code:

Now that we’ve created an Iceberg desk, we will use it for funding analysis. One of many key options of Iceberg is its assist for scalable knowledge versioning. Which means that we will simply observe adjustments to our knowledge and roll again to earlier variations with out making further copies. As a result of this knowledge will get up to date periodically, we would like to have the ability to create named snapshots of the info in order that quant merchants have easy accessibility to constant snapshots of knowledge which have their very own retention coverage. On this case, let’s tag the dataset to point that it represents the ETF holdings knowledge as of Q1 2022:

As we transfer ahead in time and new knowledge turns into accessible by Q3, we might have to replace current datasets to mirror these adjustments. Within the following instance, we first use an UPDATE assertion to mark the shares as expired within the current ETF holdings dataset. Then we use the MERGE INTO assertion based mostly on matching situations similar to ISIN code. If a match is just not discovered between the present dataset and the brand new dataset, the brand new knowledge shall be inserted as new information within the desk and standing code shall be set to ‘new’ for these information. Equally, if the present dataset has shares that aren’t current within the new dataset, these information will stay expired with a standing code of ‘expired’. Lastly, for information the place a match is discovered, the info within the current dataset shall be up to date with the info from the brand new dataset, and document may have an unchanged standing code. With Iceberg’s assist for environment friendly knowledge versioning and transactional consistency, we may be assured that our knowledge updates shall be utilized accurately and with out knowledge corruption.

As a result of we now have a brand new model of the info, we use Iceberg tagging to offer isolation for every new model of knowledge. On this case, we tag this as Q3_2022 and permit quant merchants and different customers to work on this snapshot of the info with out being affected by ongoing updates to the pipeline:

This makes it very simple to see which shares are being added and deleted. We are able to use Iceberg’s time journey function to learn the info at a given quarterly tag. First, let’s have a look at which shares are added to the index; these are the rows which can be within the Q3 snapshot however not within the Q1 snapshot. Then we’ll have a look at which shares are eliminated; these are the rows which can be within the Q1 snapshot however not within the Q3 snapshot:

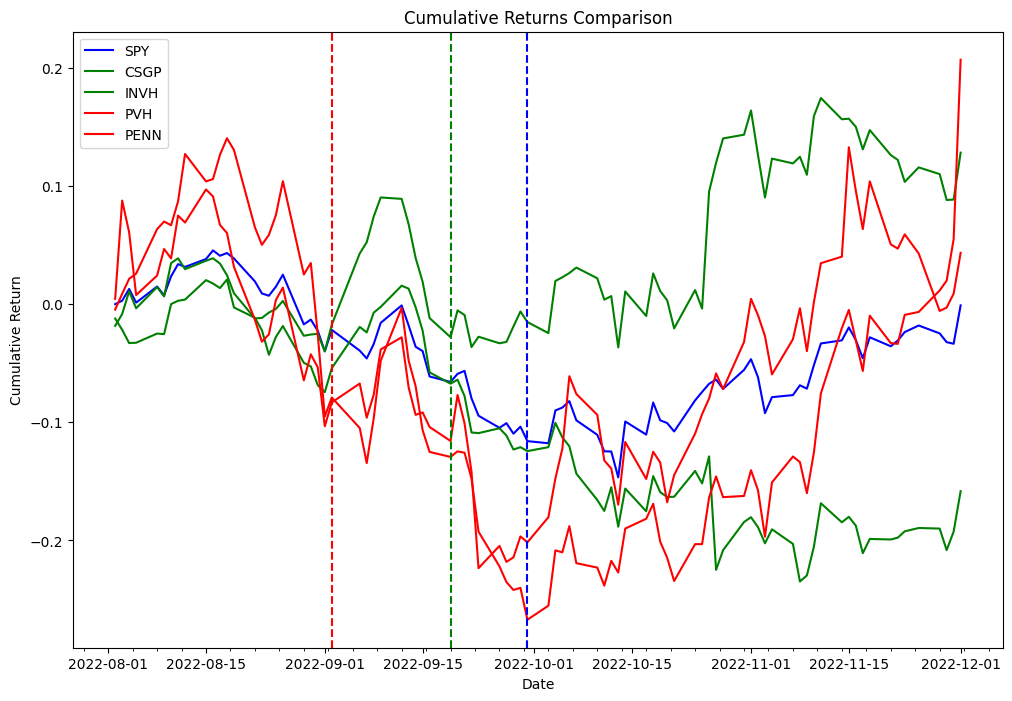

Now we use the delta obtained within the previous code to backtest the next technique. As a part of the index rebalancing arbitrage course of, we’re going to lengthy shares which can be added to the index and brief shares which can be faraway from the index, and we’ll take a look at this technique for each the efficient date and announcement date. As a proof of idea from the 2 completely different lists, we picked PVH and PENN as eliminated shares, and CSGP and INVH as added shares.

To comply with together with the examples beneath, you’ll need to make use of the pocket book offered within the Quant Analysis instance GitHub repository.

The next desk signify the portfolio orders information:

| Order Id | Column | Timestamp | Dimension | Value | Charges | Aspect |

|---|---|---|---|---|---|---|

| 0 | (PENN, PENN) | 2022-09-06 | 31948.881789 | 31.66 | 0.0 | Promote |

| 1 | (PVH, PVH) | 2022-09-06 | 18321.729571 | 55.15 | 0.0 | Promote |

| 2 | (INVH, INVH) | 2022-09-06 | 27419.797094 | 38.20 | 0.0 | Purchase |

| 3 | (CSGP, CSGP) | 2022-09-06 | 14106.361969 | 75.00 | 0.0 | Purchase |

| 4 | (CSGP, CSGP) | 2022-11-01 | 14106.361969 | 83.70 | 0.0 | Promote |

| 5 | (INVH, INVH) | 2022-11-01 | 27419.797094 | 31.94 | 0.0 | Promote |

| 6 | (PVH, PVH) | 2022-11-01 | 18321.729571 | 52.95 | 0.0 | Purchase |

| 7 | (PENN, PENN) | 2022-11-01 | 31948.881789 | 34.09 | 0.0 | Purchase |

Experimentation findings

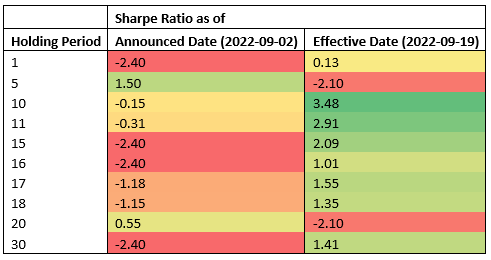

The next desk exhibits Sharpe Ratios for varied holding durations and two completely different commerce entry factors: announcement and efficient dates.

The info means that the efficient date is probably the most worthwhile entry level throughout most holding durations, whereas the announcement date is an efficient entry level for short-term holding durations (5 calendar days, 2 enterprise days). As a result of the outcomes are obtained from testing a single occasion, this isn’t statistically vital to just accept or reject a speculation that index rebalancing occasions can be utilized to generate constant alpha. The infrastructure we used for our testing can be utilized to run the identical experiment required to do speculation testing at scale, however index constituents knowledge is just not available.

Conclusion

On this submit, we demonstrated how the usage of backtesting and the Apache Iceberg tagging function can present useful insights into the efficiency of index arbitrage profitability methods. By utilizing a scalable Amazon EMR on Amazon EKS stack, researchers can simply deal with your complete funding analysis lifecycle, from knowledge assortment to backtesting. Moreover, the Iceberg tagging function can assist deal with the problem of look-ahead bias, whereas additionally offering advantages similar to knowledge retention management for GDPR compliance and sustaining lineage of the desk by way of completely different branches. The experiment findings display the effectiveness of this method in evaluating the efficiency of index arbitrage methods and might function a helpful information for researchers within the finance business.

Concerning the Authors

Boris Litvin is Principal Answer Architect, accountable for monetary providers business innovation. He’s a former Quant and FinTech founder, and is enthusiastic about systematic investing.

Boris Litvin is Principal Answer Architect, accountable for monetary providers business innovation. He’s a former Quant and FinTech founder, and is enthusiastic about systematic investing.

Man Bachar is a Options Architect at AWS, based mostly in New York. He accompanies greenfield clients and helps them get began on their cloud journey with AWS. He’s enthusiastic about identification, safety, and unified communications.

Man Bachar is a Options Architect at AWS, based mostly in New York. He accompanies greenfield clients and helps them get began on their cloud journey with AWS. He’s enthusiastic about identification, safety, and unified communications.

Noam Ouaknine is a Technical Account Supervisor at AWS, and relies in Florida. He helps enterprise clients develop and obtain their long-term technique by technical steerage and proactive planning.

Noam Ouaknine is a Technical Account Supervisor at AWS, and relies in Florida. He helps enterprise clients develop and obtain their long-term technique by technical steerage and proactive planning.

Sercan Karaoglu is Senior Options Architect, specialised in capital markets. He’s a former knowledge engineer and enthusiastic about quantitative funding analysis.

Sercan Karaoglu is Senior Options Architect, specialised in capital markets. He’s a former knowledge engineer and enthusiastic about quantitative funding analysis.

Jack Ye is a software program engineer within the Athena Knowledge Lake and Storage workforce. He’s an Apache Iceberg Committer and PMC member.

Jack Ye is a software program engineer within the Athena Knowledge Lake and Storage workforce. He’s an Apache Iceberg Committer and PMC member.

Amogh Jahagirdar is a Software program Engineer within the Athena Knowledge Lake workforce. He’s an Apache Iceberg Committer.

Amogh Jahagirdar is a Software program Engineer within the Athena Knowledge Lake workforce. He’s an Apache Iceberg Committer.