Specialization Made Necessary

A hospital is full of experts and doctors, each with their own specialization, solving distinct problems. Surgeons, cardiologists, pediatricians: experts of all kinds join hands to provide care, often collaborating to get patients the care they need. We can do the same with AI.

Mixture of Experts (MoE) architecture in artificial intelligence is a blend of different “expert” models working together to respond to complex data inputs. Each expert in an MoE model specializes in one part of a much larger problem, just as every doctor specializes in their medical field. This improves efficiency and increases system efficacy and accuracy.

Mistral AI delivers open-source foundational LLMs that rival those of OpenAI. They have formally discussed the use of an MoE architecture in their Mixtral 8x7B model, a cutting-edge Large Language Model (LLM). We’ll take a deep dive into why Mixtral by Mistral AI stands out among other foundational LLMs and why current LLMs now employ the MoE architecture, highlighting its speed, size, and accuracy.

Common Methods to Improve Large Language Models (LLMs)

To better understand how the MoE architecture enhances our LLMs, let’s discuss common methods for improving LLM efficiency. AI practitioners and developers improve models by increasing parameters, adjusting the architecture, or fine-tuning.

- Increasing Parameters: Adding parameters and feeding the model more information increases its capacity to learn and represent complex patterns. However, this can lead to overfitting and hallucinations, necessitating extensive Reinforcement Learning from Human Feedback (RLHF).

- Tweaking Architecture: Introducing new layers or modules accommodates the increasing parameter counts and improves performance on specific tasks. However, changes to the underlying architecture are challenging to implement.

- Fine-tuning: Pre-trained models can be fine-tuned on specific data or through transfer learning, allowing existing LLMs to handle new tasks or domains without starting from scratch. This is the easiest method and doesn’t require significant changes to the model.

What’s the MoE Architecture?

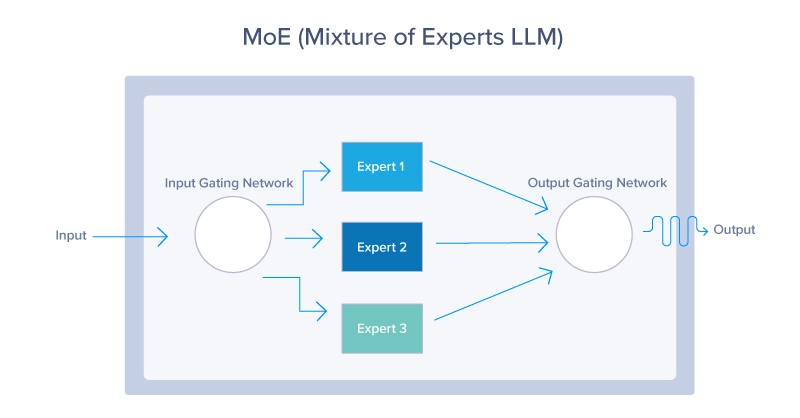

The Mixture of Experts (MoE) architecture is a neural network design that improves efficiency and performance by dynamically activating a subset of specialized networks, called experts, for each input. A gating network determines which experts to activate, leading to sparse activation and reduced computational cost. The MoE architecture consists of two critical components: the gating network and the experts. Let’s break that down:

At its heart, the MoE architecture functions like an efficient traffic system, directing each vehicle (in this case, data) to the best route based on real-time conditions and the desired destination. Each task is routed to the most suitable expert, or sub-model, specialized in handling that particular task. This dynamic routing ensures that the most capable resources are employed for each task, enhancing the overall efficiency and effectiveness of the model. The MoE architecture takes advantage of all three ways to improve a model’s fidelity.

- By implementing multiple experts, MoE inherently increases the model’s parameter size by adding more parameters per expert.

- MoE changes the classic neural network architecture by incorporating a gating network that determines which experts to use for a designated task.

- Every AI model has some degree of fine-tuning, so every expert in an MoE is fine-tuned to perform as intended, an added layer of tuning that traditional models couldn’t take advantage of.

MoE Gating Network

The gating network acts as the decision-maker or controller within the MoE model. It evaluates incoming tasks and determines which expert is suited to handle them. This decision is typically based on learned weights, which are adjusted over time through training, further improving its ability to match tasks with experts. The gating network can employ various strategies, from probabilistic methods where soft assignments are made to multiple experts, to deterministic methods that route each task to a single expert.
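To make the two routing strategies concrete, here is a minimal sketch in plain Python. It assumes a softmax over linear gate scores followed by a top-k selection, which is one common way to build a gate and not necessarily what any particular model uses. The names `gate`, `gate_weights`, and `top_k`, and all the numbers, are hypothetical and chosen only for illustration.

```python
import math

def softmax(scores):
    """Turn raw gate scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def gate(token, gate_weights, top_k=2):
    """Score every expert for one token and keep the top_k.

    token:        list of floats (the token's feature vector)
    gate_weights: one weight vector per expert (made-up values here;
                  in a real model these are learned during training)
    Returns (expert_index, weight) pairs whose weights sum to 1.
    """
    scores = [sum(w * x for w, x in zip(weights, token)) for weights in gate_weights]
    probs = softmax(scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in chosen]

token = [1.0, 0.0]
gate_weights = [[2.0, 0.0], [1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]

soft = gate(token, gate_weights, top_k=2)  # soft assignment across two experts
hard = gate(token, gate_weights, top_k=1)  # deterministic single-expert routing
```

With `top_k=2` the token is shared between the two best-scoring experts in proportion to their gate probabilities; with `top_k=1` the same gate collapses to routing each token to a single expert.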

MoE Experts

Each expert in the MoE model represents a smaller neural network, machine learning model, or LLM optimized for a specific subset of the problem domain. For example, in Mixtral, different experts might specialize in understanding certain languages, dialects, or even types of queries. The specialization ensures each expert is proficient in its niche, which, when combined with the contributions of other experts, leads to superior performance across a wide range of tasks.

MoE Loss Function

Although not considered a main component of the MoE architecture, the loss function plays a pivotal role in the eventual performance of the model, as it’s designed to optimize both the individual experts and the gating network.

It typically combines the losses computed for each expert, weighted by the probability or importance assigned to them by the gating network. This helps to fine-tune the experts for their specific tasks while adjusting the gating network to improve routing accuracy.
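The weighted combination described above can be sketched in a few lines of Python. This is an illustrative toy, assuming a squared-error loss per expert and scalar predictions. The function names and numbers are hypothetical, and large sparse LLMs often apply the loss to the combined output instead of to each expert separately.

```python
def squared_error(prediction, target):
    """Per-expert loss; squared error is an assumption made for this sketch."""
    return (prediction - target) ** 2

def moe_loss(expert_predictions, gate_probs, target):
    """Sum each expert's loss, weighted by the gate's probability for that expert."""
    return sum(
        prob * squared_error(pred, target)
        for pred, prob in zip(expert_predictions, gate_probs)
    )

expert_predictions = [0.9, 0.2, 0.5]  # what each expert predicted
gate_probs = [0.7, 0.2, 0.1]          # how much the gate trusted each expert
loss = moe_loss(expert_predictions, gate_probs, target=1.0)
```

Because the gate's probabilities multiply each expert's loss, lowering the total loss pushes the gate toward experts that predict well for this input while still improving the experts it relies on.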

The MoE Process Start to Finish

Now let’s sum up the entire process, adding more details. Here's how the routing process works from start to finish:

- Input Processing: Initial handling of incoming data. In the case of LLMs, this is primarily our prompt.

- Feature Extraction: Transforming raw input for analysis.

- Gating Network Evaluation: Assessing expert suitability through probabilities or weights.

- Weighted Routing: Allocating input based on computed weights. Here, the process of choosing the most suitable expert is completed. In some cases, multiple experts are chosen to answer a single input.

- Task Execution: Processing allocated input by each expert.

- Integration of Expert Outputs: Combining individual expert results for the final output.

- Feedback and Adaptation: Using performance feedback to improve models.

- Iterative Optimization: Continuous refinement of routing and model parameters.
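The inference-time steps in this list (gating evaluation, weighted routing, task execution, and integration) can be sketched as a single forward pass. This is a toy under stated assumptions: the "experts" are simple scaling functions standing in for real feed-forward networks, the gate is a softmax over linear scores, and every name and number is hypothetical. The feedback and optimization steps belong to training and are not shown.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def make_expert(scale):
    """Toy expert that scales each feature (a stand-in for a small network)."""
    return lambda features: [scale * f for f in features]

def moe_forward(features, gate_weights, experts, top_k=2):
    # Gating network evaluation: one score per expert, turned into probabilities.
    probs = softmax([dot(w, features) for w in gate_weights])
    # Weighted routing: keep the top_k experts and renormalize their weights.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    # Task execution: only the chosen experts run; the rest stay idle.
    outputs = {i: experts[i](features) for i in chosen}
    # Integration of expert outputs: weighted sum of the chosen experts' results.
    combined = [0.0] * len(features)
    for i in chosen:
        weight = probs[i] / norm
        combined = [c + weight * o for c, o in zip(combined, outputs[i])]
    return combined, chosen

experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
gate_weights = [[0.0, 0.0], [5.0, 0.0], [0.0, 0.0], [5.0, 0.0]]
output, used = moe_forward([1.0, 1.0], gate_weights, experts)
```

In this example the gate scores experts 1 and 3 equally and well above the others, so only those two run and the output is the even blend of their results.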

Popular Models that Utilize an MoE Architecture

- OpenAI’s GPT-4 and GPT-4o: GPT-4 and GPT-4o power the premium version of ChatGPT. These multi-modal models, which ingest different source mediums like images, text, and voice, are reported to use MoE. It is rumored, though not officially confirmed by OpenAI, that GPT-4 has 8 experts, each with 220 billion parameters, bringing the entire model to over 1.7 trillion parameters.

- Mistral AI’s Mixtral 8x7B: Mistral AI delivers very strong open-source AI models and has said its Mixtral model is an sMoE, or sparse Mixture of Experts, model delivered in a small package. Mixtral 8x7B has a total of 46.7 billion parameters but only uses 12.9B parameters per token, so it processes inputs and outputs at the cost of a model of that size. According to Mistral AI, the model matches or outperforms Llama 2 (70B) and GPT-3.5 (reportedly 175B) on most benchmarks while costing less to run.
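A short back-of-the-envelope calculation shows why "8x7B" comes to 46.7B and not 56B. It takes the 46.7B total and 12.9B per-token figures quoted above and adds one assumption that is not stated in this article: that each token is routed to 2 of the 8 experts, with the remaining parameters (attention layers, embeddings, and so on) shared by all experts. The variable names are illustrative.

```python
# Figures quoted above, in billions of parameters.
TOTAL_PARAMS = 46.7
ACTIVE_PARAMS = 12.9
NUM_EXPERTS = 8
EXPERTS_PER_TOKEN = 2  # assumption: top-2 routing

# Two equations, two unknowns:
#   total  = shared + NUM_EXPERTS       * per_expert
#   active = shared + EXPERTS_PER_TOKEN * per_expert
per_expert = (TOTAL_PARAMS - ACTIVE_PARAMS) / (NUM_EXPERTS - EXPERTS_PER_TOKEN)
shared = ACTIVE_PARAMS - EXPERTS_PER_TOKEN * per_expert
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

print(f"per-expert parameters: ~{per_expert:.1f}B")
print(f"shared parameters:     ~{shared:.1f}B")
print(f"active per token:      ~{active_fraction:.0%} of the model")
```

Under that assumption each expert holds roughly 5.6B parameters and about 1.6B are shared, so a token touches a little over a quarter of the model's weights even though all 46.7B must be kept in memory.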

The Benefits of MoE and Why It's the Preferred Architecture

Ultimately, the main goal of the MoE architecture is to present a paradigm shift in how complex machine learning tasks are approached. It offers unique benefits and demonstrates its superiority over traditional models in several ways.

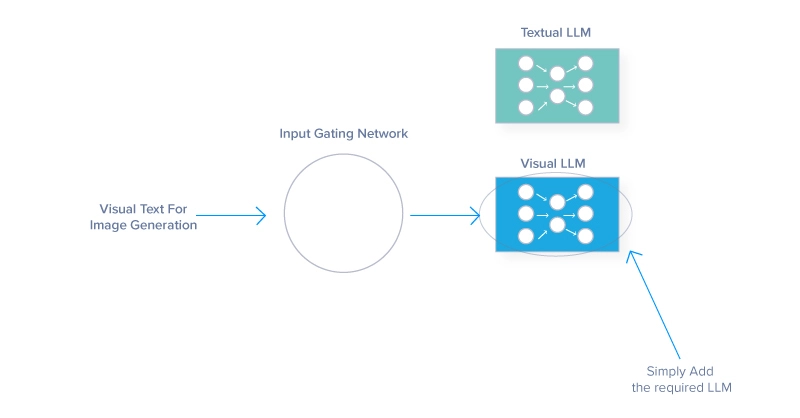

- Enhanced Model Scalability

  - Each expert is responsible for part of a task, so scaling by adding experts will not incur a proportional increase in computational demands.

  - This modular approach can handle larger and more diverse datasets and facilitates parallel processing, speeding up operations. For instance, a text-based model can integrate an additional expert for interpreting pictures while still being able to output text.

  - Versatility allows the model to expand its capabilities across different types of data inputs.

- Improved Efficiency and Flexibility

  - MoE models are extremely efficient, selectively engaging only the necessary experts for specific inputs, unlike conventional architectures that use all their parameters regardless.

  - The architecture reduces the computational load per inference, allowing the model to adapt to varying data types and specialized tasks.

- Specialization and Accuracy

  - Each expert in an MoE system can be finely tuned to specific aspects of the overall problem, leading to greater expertise and accuracy in those areas.

  - Specialization like this is helpful in fields like medical imaging or financial forecasting, where precision is key.

  - MoE can generate better results in narrow domains thanks to its nuanced understanding, detailed knowledge, and ability to outperform generalist models on specialized tasks.

The Downsides of the MoE Architecture

While the MoE architecture offers significant advantages, it also comes with challenges that can impact its adoption and effectiveness.

- Model Complexity: Managing multiple neural network experts and a gating network for directing traffic makes MoE development challenging and raises operational costs.

- Training Stability: Interaction between the gating network and the experts introduces unpredictable dynamics that hinder achieving uniform learning rates and require extensive hyperparameter tuning.

- Imbalance: Leaving experts idle is poor optimization for the MoE model, since it spends resources on experts that aren’t in use or relies on certain experts too much. Balancing the workload distribution and tuning an effective gate are crucial for a high-performing MoE AI.
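One common way to discourage the imbalance described in the last bullet is to add an auxiliary penalty during training that grows when a few experts receive most of the traffic. The sketch below follows the general shape of the load-balancing term popularized by sparse Transformer work (number of experts times the sum, over experts, of routed-token fraction times average gate probability). It is an illustration with made-up inputs, not a claim about how any model named in this article is trained.

```python
def load_balance_loss(assignments, gate_probs, num_experts):
    """Auxiliary penalty that is smallest when experts share the load evenly.

    assignments: expert index chosen for each token (top-1 routing assumed)
    gate_probs:  per-token list of gate probabilities over all experts
    """
    num_tokens = len(assignments)
    # f[i]: fraction of tokens actually routed to expert i
    f = [assignments.count(i) / num_tokens for i in range(num_experts)]
    # p[i]: average gate probability assigned to expert i
    p = [sum(row[i] for row in gate_probs) / num_tokens for i in range(num_experts)]
    return num_experts * sum(fi * pi for fi, pi in zip(f, p))

# Four tokens spread evenly over four experts.
balanced = load_balance_loss([0, 1, 2, 3], [[0.25] * 4] * 4, num_experts=4)

# Four tokens all sent to expert 0 while the other experts sit idle.
collapsed = load_balance_loss([0, 0, 0, 0], [[0.97, 0.01, 0.01, 0.01]] * 4, num_experts=4)
```

The evenly spread case scores 1.0, the minimum for uniform routing, while the collapsed case scores close to the number of experts, so adding this term to the training loss nudges the gate toward using every expert.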

It should be noted that the above drawbacks usually diminish over time as the MoE architecture is improved.

The Future Shaped by Specialization

Reflecting on the MoE approach and its human parallel, we see that just as specialized teams achieve more than a generalized workforce, specialized models outperform their monolithic counterparts in AI. Prioritizing diversity and expertise turns the complexity of large-scale problems into manageable segments that experts can tackle effectively.

As we look to the future, consider the broader implications of specialized systems in advancing other technologies. The principles of MoE could influence developments in sectors like healthcare, finance, and autonomous systems, promoting more efficient and accurate solutions.

The journey of MoE is just beginning, and its continued evolution promises to drive further innovation in AI and beyond. As high-performance hardware continues to advance, this mixture of expert AIs can reside in our smartphones, capable of delivering even smarter experiences. But first, someone’s going to need to train one.

Kevin Vu manages the Exxact Corp blog and works with many of its talented authors who write about different aspects of Deep Learning.