Image by Author

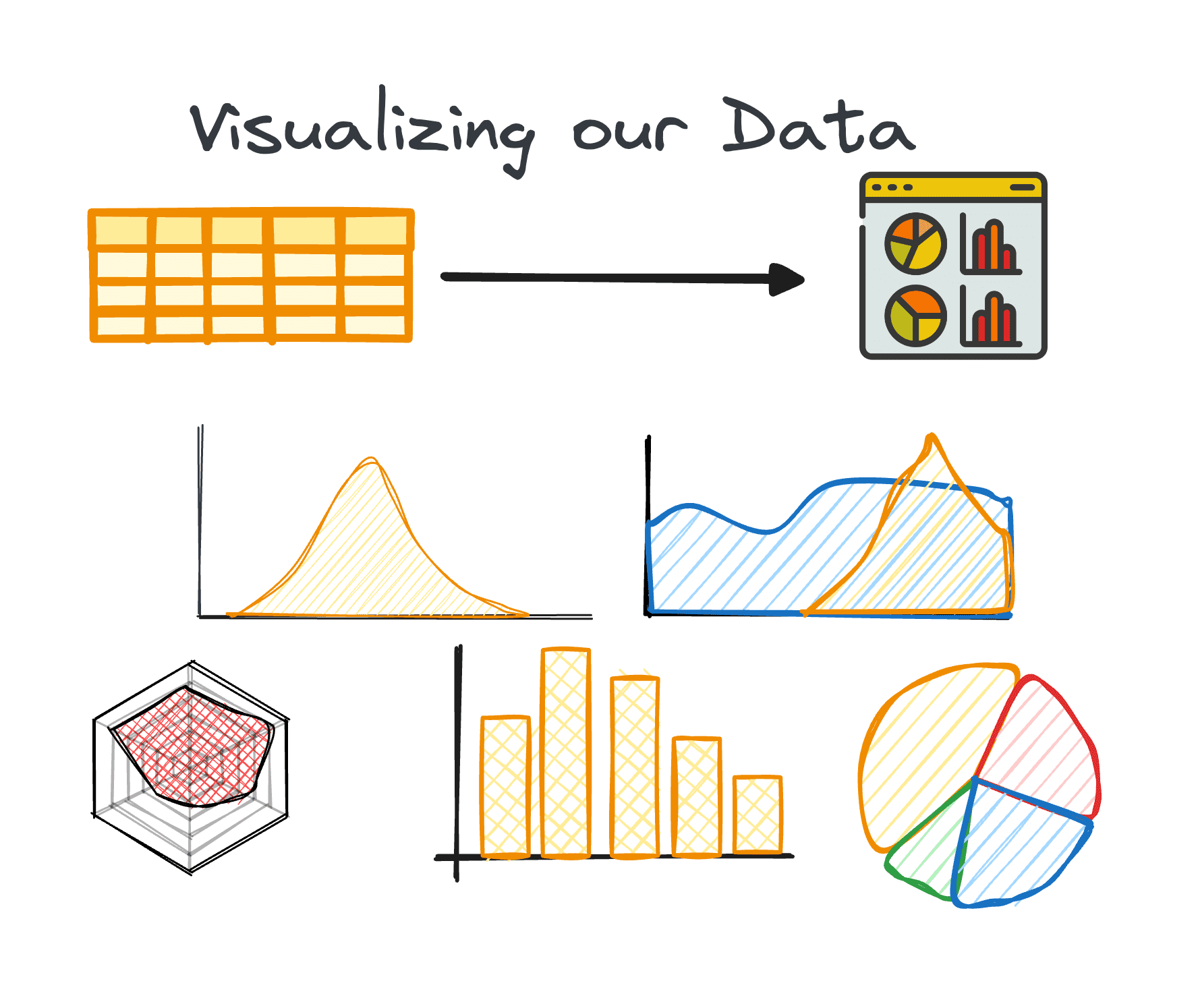

Exploratory Data Analysis (or EDA) is a core phase of the Data Analysis Process, centered on a thorough investigation of a dataset’s inner details and characteristics.

Its primary aim is to uncover underlying patterns, grasp the dataset’s structure, and identify any potential anomalies or relationships between variables.

By performing EDA, data professionals check the quality of the data. This ensures that further analysis is based on accurate and insightful information, reducing the likelihood of errors in subsequent stages.

So let’s walk through the basic steps of a good EDA for our next Data Science project.

I’m quite sure you have already heard the phrase:

Garbage in, Garbage out

Input data quality is always the most important factor for any successful data project.

Unfortunately, most data is dirty at first. Through the process of Exploratory Data Analysis, a dataset that is nearly usable can be transformed into one that is fully usable.

It is important to clarify that EDA isn’t a magic solution for purifying any dataset. Nonetheless, numerous EDA techniques are effective at addressing several typical issues encountered within datasets.

So… let’s learn the most basic steps according to Ayodele Oluleye in his book Exploratory Data Analysis with Python Cookbook.

1. Data Collection

The initial step in any data project is having the data itself. This first step is where data is gathered from various sources for subsequent analysis.
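As a minimal sketch of this step, assuming the data arrives as CSV, the snippet below loads it into a pandas DataFrame. The column names and values are made up for illustration, and an in-memory string stands in for a real file path or URL.

```python
import io

import pandas as pd

# Hypothetical inline CSV standing in for a real file or URL.
raw_csv = io.StringIO(
    "order_id,city,amount\n"
    "1,Barcelona,120.5\n"
    "2,Madrid,80.0\n"
    "3,Barcelona,\n"
)

# read_csv accepts a path, a URL, or a file-like object.
orders = pd.read_csv(raw_csv)

print(orders.shape)   # (number of rows, number of columns)
print(orders.dtypes)  # data type inferred for each column
print(orders.head())  # first rows, for a quick sanity check
```

Checking the shape and inferred types right after loading is a cheap way to catch parsing problems before any analysis starts.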

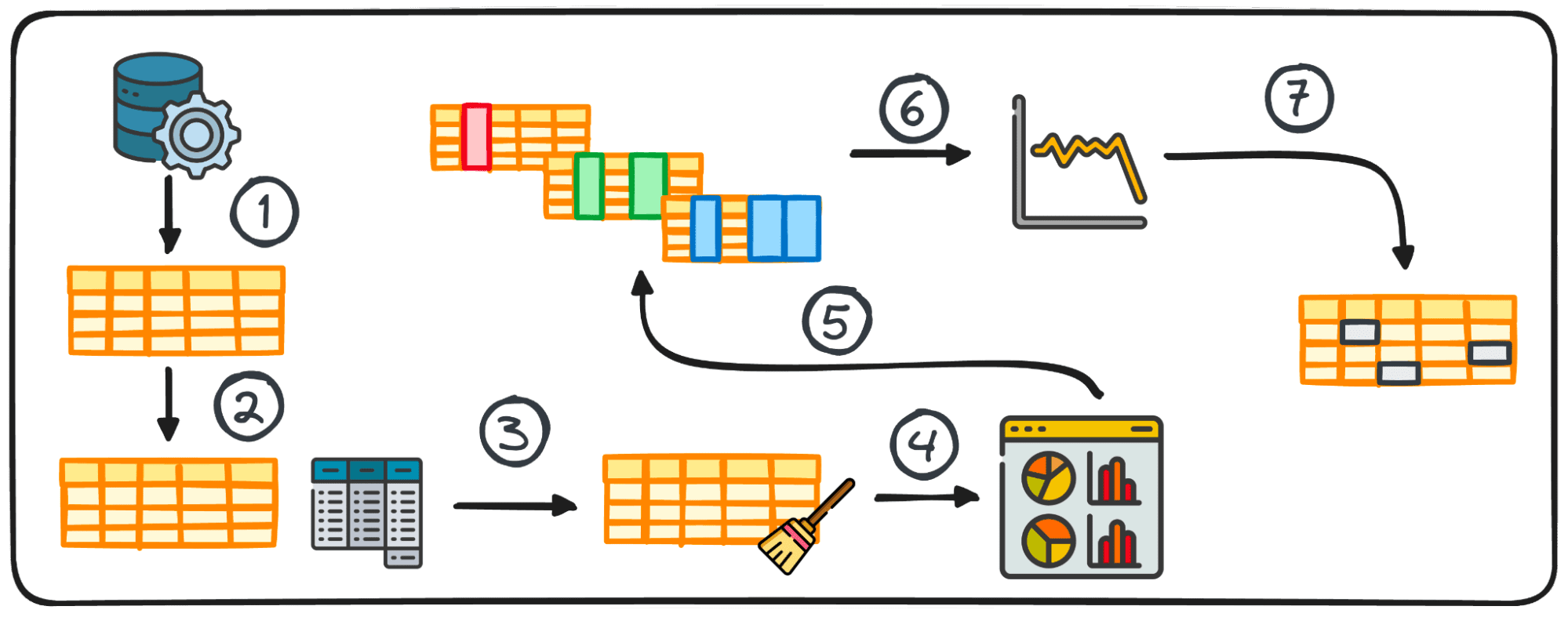

2. Summary Statistics

In data analysis, handling tabular data is quite common. During the analysis of such data, it is often necessary to gain quick insights into the data’s patterns and distribution.

These initial insights serve as a base for further exploration and in-depth analysis and are known as summary statistics.

They offer a concise overview of the dataset’s distribution and patterns, encapsulated by metrics such as mean, median, mode, variance, standard deviation, range, percentiles, and quartiles.
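The metrics listed above can be computed directly with pandas. The following is a small sketch on a made-up series of scores; the data and the `summary` dictionary are illustrative only.

```python
import pandas as pd

# Hypothetical sample data.
scores = pd.Series([2, 4, 4, 5, 7, 9, 11], name="score")

summary = {
    "mean": scores.mean(),
    "median": scores.median(),
    "mode": scores.mode().tolist(),   # a series can have several modes
    "variance": scores.var(),         # sample variance (ddof=1 by default)
    "std": scores.std(),              # sample standard deviation
    "range": scores.max() - scores.min(),
    "quartiles": scores.quantile([0.25, 0.5, 0.75]).tolist(),
}
print(summary)

# describe() bundles count, mean, std, min, quartiles, and max in one call.
print(scores.describe())
```

For a whole DataFrame, `df.describe()` returns the same overview for every numerical column at once.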

Image by Author

3. Preparing Data for EDA

Before starting our exploration, data usually needs to be prepared for further analysis. Data preparation involves transforming, aggregating, or cleaning data using Python’s pandas library to suit the needs of your analysis.

This step is tailored to the data’s structure and can include grouping, appending, merging, sorting, categorizing, and dealing with duplicates.

In Python, the pandas library makes this task easier through its various functions and methods.

The preparation process for tabular data doesn’t follow a universal method; instead, it’s shaped by the specific characteristics of our data, including its rows, columns, data types, and the values it contains.
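To make these operations concrete, here is a hedged sketch that chains a few of them (removing duplicates, merging, categorizing, grouping, and sorting) on two small invented tables. The table names, columns, and bin edges are assumptions for illustration, not a recipe that fits every dataset.

```python
import pandas as pd

# Hypothetical sales records; the first two rows are exact duplicates.
sales = pd.DataFrame({
    "store": ["A", "A", "B", "B", "B"],
    "day": ["Mon", "Mon", "Mon", "Tue", "Wed"],
    "units": [10, 10, 3, 8, 5],
})
# Hypothetical lookup table with one row per store.
stores = pd.DataFrame({"store": ["A", "B"], "city": ["Barcelona", "Madrid"]})

# Dealing with duplicates: keep the first of each identical row.
clean = sales.drop_duplicates()

# Merging: attach the city of each store.
merged = clean.merge(stores, on="store", how="left")

# Categorizing: bucket units into (0, 5] and (5, 20].
merged["size"] = pd.cut(merged["units"], bins=[0, 5, 20], labels=["small", "large"])

# Grouping and sorting: total units per city, largest first.
totals = (
    merged.groupby("city", as_index=False)["units"]
    .sum()
    .sort_values("units", ascending=False)
)
print(totals)
```

Which of these steps are needed, and in what order, depends on the dataset at hand.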

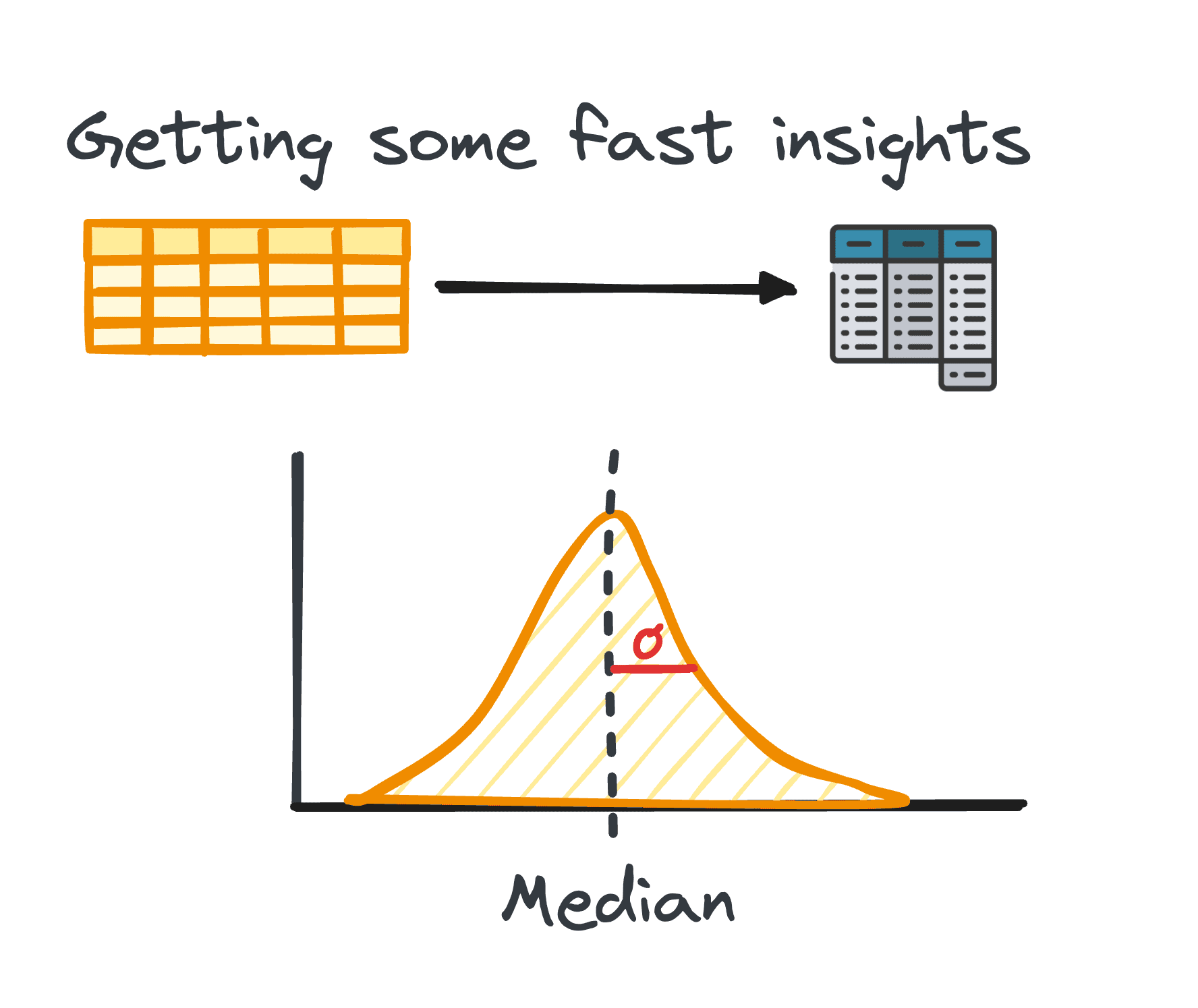

4. Visualizing Data

Visualization is a core component of EDA, making complex relationships and trends within the dataset easy to understand.

Using the right charts can help us identify trends within a big dataset and find hidden patterns or outliers. Python offers several libraries for data visualization, including Matplotlib and Seaborn, among others.
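As an illustration, the sketch below uses Matplotlib to draw two common EDA charts: a histogram for a single variable's distribution and a scatter plot for the relationship between two variables. The data, column names, and output file name are hypothetical.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend, so the script runs without a display
import matplotlib.pyplot as plt
import pandas as pd

# Hypothetical data: study hours and exam scores.
df = pd.DataFrame({
    "hours": [1, 2, 3, 4, 5, 6, 7, 8],
    "score": [52, 55, 61, 64, 70, 73, 79, 95],
})

fig, (ax_hist, ax_scatter) = plt.subplots(1, 2, figsize=(10, 4))

# Histogram: how the values of one variable are distributed.
ax_hist.hist(df["score"], bins=5)
ax_hist.set_title("Distribution of score")
ax_hist.set_xlabel("score")

# Scatter plot: how two numerical variables move together.
ax_scatter.scatter(df["hours"], df["score"])
ax_scatter.set_title("Score vs. hours")
ax_scatter.set_xlabel("hours")
ax_scatter.set_ylabel("score")

fig.tight_layout()
fig.savefig("eda_overview.png")  # hypothetical output file name
```

In a notebook, the `matplotlib.use("Agg")` line can be dropped and the figure displayed inline instead of saved.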

Image by Author

5. Performing Variable Analysis

Variable analysis can be univariate, bivariate, or multivariate. Each of them provides insights into the distribution of, and correlations between, the dataset’s variables. Techniques vary depending on the number of variables analyzed:

Univariate

The main focus in univariate analysis is on examining each variable within our dataset on its own. During this analysis, we can uncover insights such as the median, mode, maximum, range, and outliers.

This type of analysis is applicable to both categorical and numerical variables.
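A short sketch of univariate analysis on one numerical and one categorical column follows. The DataFrame and its column names are invented for the example.

```python
import pandas as pd

# Hypothetical dataset with one numerical and one categorical variable.
df = pd.DataFrame({
    "age": [23, 25, 25, 31, 38, 40, 62],
    "plan": ["free", "pro", "free", "free", "pro", "free", "team"],
})

# Numerical variable: central tendency and spread.
age_stats = df["age"].agg(["median", "max", "min"])
age_range = age_stats["max"] - age_stats["min"]
age_mode = df["age"].mode().iloc[0]
print(age_stats, age_range, age_mode)

# Categorical variable: frequency of each category, absolute and relative.
plan_counts = df["plan"].value_counts()
plan_share = df["plan"].value_counts(normalize=True)
print(plan_counts)
print(plan_share)
```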

Bivariate

Bivariate analysis aims to reveal insights about two chosen variables, focusing on their joint distribution and the relationship between them.

As we analyze two variables at the same time, this type of analysis can be trickier. It can involve three different pairs of variables: numerical-numerical, numerical-categorical, and categorical-categorical.
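Each of the three pairings has a natural first tool in pandas. The sketch below, on made-up data, uses a Pearson correlation for numerical-numerical, a grouped mean for numerical-categorical, and a contingency table for categorical-categorical. These are common choices, not the only ones.

```python
import pandas as pd

# Hypothetical dataset mixing numerical and categorical variables.
df = pd.DataFrame({
    "hours": [1, 2, 3, 4, 5, 6],
    "score": [50, 55, 60, 70, 80, 90],
    "group": ["a", "a", "a", "b", "b", "b"],
    "passed": ["no", "no", "yes", "yes", "yes", "yes"],
})

# Numerical-numerical: Pearson correlation coefficient.
corr = df["hours"].corr(df["score"])

# Numerical-categorical: compare the mean score across groups.
mean_by_group = df.groupby("group")["score"].mean()

# Categorical-categorical: contingency table of counts.
table = pd.crosstab(df["group"], df["passed"])

print(corr)
print(mean_by_group)
print(table)
```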

Multivariate

A frequent challenge with large datasets is the simultaneous analysis of multiple variables. Although univariate and bivariate analysis methods offer valuable insights, they are usually not enough for analyzing datasets containing many variables (usually more than five).

This issue of managing high-dimensional data, usually referred to as the curse of dimensionality, is well-documented. Having a large number of variables can be advantageous, as it allows the extraction of more insights. At the same time, this advantage can work against us due to the limited number of techniques available for analyzing or visualizing multiple variables simultaneously.
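Two widely used starting points for multivariate analysis are a correlation matrix and principal component analysis (PCA). The text above does not name a specific technique, so treat both as illustrative choices. The sketch below runs them on synthetic data, computing PCA with NumPy's SVD to avoid extra dependencies; all variable names are hypothetical.

```python
import numpy as np
import pandas as pd

# Synthetic data: x1 and x2 share a common signal, x3 and x4 are independent noise.
rng = np.random.default_rng(0)
n = 200
base = rng.normal(size=n)
df = pd.DataFrame({
    "x1": base + rng.normal(scale=0.1, size=n),
    "x2": 2 * base + rng.normal(scale=0.1, size=n),
    "x3": rng.normal(size=n),
    "x4": rng.normal(size=n),
})

# Pairwise Pearson correlations between all variables.
corr_matrix = df.corr()
print(corr_matrix.round(2))

# PCA via SVD on standardized data (zero mean, unit variance per column).
standardized = ((df - df.mean()) / df.std()).to_numpy()
_, singular_values, components = np.linalg.svd(standardized, full_matrices=False)

# Share of total variance captured by each principal component.
explained_ratio = singular_values**2 / np.sum(singular_values**2)
print(explained_ratio.round(3))

# Project the data onto the first two components for a 2D view.
projected = standardized @ components[:2].T
```

Plotting `projected` as a scatter plot gives a two-dimensional view of data that originally had four variables.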

6. Analyzing Time Series Data

This step focuses on examining data points collected over regular time intervals. Time series data is data that changes over time: the dataset consists of a group of data points recorded at regular intervals.

When we analyze time series data, we can often uncover patterns or trends that repeat over time and show temporal seasonality. Key components of time series data include trends, seasonal variations, cyclical variations, and irregular variations or noise.
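The trend and seasonal components mentioned above can be approximated with plain pandas. The sketch below builds a synthetic daily series with a linear trend and a weekend bump (both invented for the example), then uses a rolling mean to smooth out the weekly pattern and a weekday average to expose it.

```python
import numpy as np
import pandas as pd

# Synthetic daily series: 8 weeks starting on a Monday.
dates = pd.date_range("2024-01-01", periods=56, freq="D")
trend = np.linspace(100, 128, num=56)                # slow upward trend
weekly = np.where(dates.dayofweek >= 5, 20.0, 0.0)   # bump on Saturday and Sunday
visits = pd.Series(trend + weekly, index=dates, name="visits")

# Trend: a centered 7-day rolling mean averages out the weekly pattern.
rolling_trend = visits.rolling(window=7, center=True).mean()

# Aggregation: total visits per calendar week (weeks end on Sunday).
weekly_totals = visits.resample("W").sum()

# Seasonality: average value per day of the week (0 = Monday, 6 = Sunday).
by_weekday = visits.groupby(visits.index.dayofweek).mean()

print(rolling_trend.dropna().head())
print(weekly_totals)
print(by_weekday)
```

The window of 7 matches the weekly period of this synthetic series; real data may need a different window, or a dedicated decomposition method.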

7. Dealing with Outliers and Missing Values

Outliers and missing values can skew analysis results if not properly addressed. This is why we should always dedicate a specific phase to dealing with them.

Identifying, removing, or replacing these data points is crucial for maintaining the integrity of the dataset’s analysis. Therefore, it’s highly important to address them before we start analyzing our data.

- Outliers are data points that deviate significantly from the rest. They usually have unusually high or low values.

- Missing values are the absence of data points for a specific variable or observation.

A crucial initial step in dealing with missing values and outliers is to understand why they’re present in the dataset. This understanding often guides the selection of the most suitable method for addressing them. Additional factors to consider are the characteristics of the data and the specific analysis that will be performed.
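As one possible approach, the sketch below flags outliers with the common 1.5 × IQR rule and fills missing values with the median. Both choices are assumptions made for illustration; as noted above, the right method depends on why the values are missing or extreme. The data is invented.

```python
import numpy as np
import pandas as pd

# Hypothetical incomes with one missing value and one extreme value.
df = pd.DataFrame({"income": [32.0, 35.0, 36.0, 38.0, np.nan, 41.0, 44.0, 250.0]})

# Missing values: count them first.
missing_count = df["income"].isna().sum()

# Outliers: flag points beyond 1.5 * IQR from the quartiles (NaN is ignored).
q1, q3 = df["income"].quantile([0.25, 0.75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
is_outlier = (df["income"] < lower) | (df["income"] > upper)

# One option among many: treat outliers as missing, then impute with the median.
cleaned = df.copy()
cleaned.loc[is_outlier, "income"] = np.nan
cleaned["income"] = cleaned["income"].fillna(cleaned["income"].median())

print(missing_count, int(is_outlier.sum()))
print(cleaned)
```

Dropping the affected rows, or keeping genuine extreme values untouched, can be equally valid depending on the analysis.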

EDA not only enhances the dataset’s clarity but also enables data professionals to navigate the curse of dimensionality by providing strategies for managing datasets with numerous variables.

Through these meticulous steps, EDA with Python equips analysts with the tools necessary to extract meaningful insights from data, laying a solid foundation for all subsequent data analysis endeavors.

Josep Ferrer is an analytics engineer from Barcelona. He graduated in physics engineering and is currently working in the Data Science field applied to human mobility. He’s a part-time content creator focused on data science and technology. You can contact him on LinkedIn, Twitter or Medium.