Introduction

Large Language Models (LLMs) have gained a lot of attention recently and achieved impressive results in various NLP tasks. Building on this momentum, it’s crucial to dive deeper into specific applications of LLMs, such as their use in the task of few-shot Named Entity Recognition (NER). This leads us to the focus of our ongoing exploration: a comparative analysis of LLMs’ performance in few-shot NER. We are trying to understand:

- Do LLMs outperform supervised methods in few-shot NER?

- Which LLMs are currently the most performant?

- How else can LLMs be used in few-shot NER?

Check out our previous blog post on what NER is and the current state-of-the-art (SOTA) few-shot NER methods.

In this blog post, we continue our discussion to find out whether LLMs reign supreme in few-shot NER. To do this, we’ll be looking at several recently released papers that address each of the questions above. Recent research indicates that when there’s a wealth of labeled examples for a certain entity type, LLMs still lag behind supervised methods for that particular entity type. Yet, for most entity types there’s a shortage of annotated data. Novel entity types are continually arising, and creating annotated examples is a costly and lengthy process, particularly in high-value fields like biomedicine where specialized knowledge is necessary for annotation. As such, few-shot NER remains a relevant and important task.

How do LLMs stack up against supervised methods?

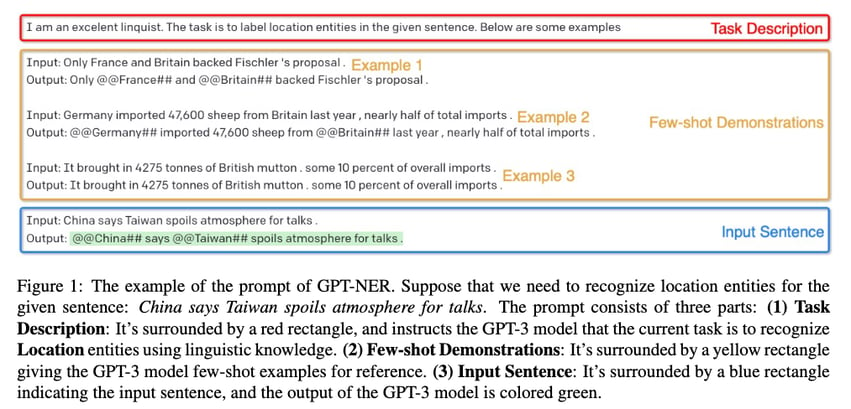

To find out, let’s take a look at GPT-NER by Shuhe Wang et al., which was published in April 2023. The authors proposed to transform the NER sequence labeling task (assigning classes to tokens) into a generation task (producing text), which should make it easier for LLMs such as GPT models to deal with. The figure below is an example of how the prompts are constructed to obtain labels when the model is given an instruction along with a few examples.

GPT-NER prompt construction example (Shuhe Wang et al.)

To transform the task into something more easily digestible for LLMs, the authors add special symbols marking the locations of the named entities: for example, France becomes @@France##. After seeing a few examples of this, the model then has to mark the entities in its answers in the same way. In this setting, only one type of entity (e.g. location or person) is detected using one prompt. If multiple entity types need to be detected, the model has to be queried several times.
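To make the marker scheme concrete, here is a minimal sketch of building a one-entity-type prompt and reading the entities back out of a marked answer. The `@@...##` markers come from the paper as described above; the prompt wording and the function names (`build_prompt`, `extract_entities`, `strip_markers`) are our own assumptions for illustration, not the authors’ exact template.

```python
import re

# Entities are wrapped as @@entity## in the demonstrations and in the model's answer.
MARKED_ENTITY = re.compile(r"@@(.+?)##")


def build_prompt(entity_type: str, demos: list[tuple[str, str]], sentence: str) -> str:
    """Assemble a few-shot prompt for ONE entity type (hypothetical wording)."""
    lines = [f"Mark every {entity_type} entity in the input sentence with @@ and ##."]
    for plain, marked in demos:
        lines.append(f"Input: {plain}")
        lines.append(f"Output: {marked}")
    lines.append(f"Input: {sentence}")
    lines.append("Output:")
    return "\n".join(lines)


def extract_entities(marked_output: str) -> list[str]:
    """Return the strings that the model wrapped in @@...## markers."""
    return MARKED_ENTITY.findall(marked_output)


def strip_markers(marked_output: str) -> str:
    """Recover the plain sentence by removing the markers."""
    return MARKED_ENTITY.sub(r"\1", marked_output)


demos = [("Columbus is a city in Ohio.", "@@Columbus## is a city in @@Ohio##.")]
prompt = build_prompt("location", demos, "France and Germany signed a treaty.")

# Pretend this string is what the model returned for the prompt above.
answer = "@@France## and @@Germany## signed a treaty."
entities = extract_entities(answer)
```

Because each prompt targets a single entity type, detecting both locations and persons would mean calling `build_prompt` once per type and merging the results.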

The authors used GPT-3 and conducted experiments over 4 different NER datasets. Unsurprisingly, supervised models continue to outperform GPT-NER in the fully supervised setting, as LLMs are usually seen as generalists. LLMs also suffer from hallucination, a phenomenon where LLMs generate text that isn’t real, or is inaccurate or nonsensical. The authors claimed that, in their case, the model tended to over-confidently mark non-entity words as named entities. To counteract the issue of hallucination, the authors propose a self-verification strategy: when the model says something is an entity, it’s then asked a yes/no question to verify whether the extracted entity belongs to the specified type. Using this self-verification strategy further improves the model’s performance but doesn’t yet bridge the gap in performance when compared to supervised methods.
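The self-verification step can be sketched as a second pass over the extracted candidates. This is only an illustration under stated assumptions: the question wording is ours, `ask_llm` is a hypothetical callable standing in for whatever model API you use, and `toy_llm` is a hard-coded stand-in so the example runs without a model.

```python
from typing import Callable


def verify_entities(
    sentence: str,
    candidates: list[str],
    entity_type: str,
    ask_llm: Callable[[str], str],
) -> list[str]:
    """Keep only the candidates the model confirms with a yes/no answer."""
    kept = []
    for candidate in candidates:
        question = (
            f'In the sentence "{sentence}", is "{candidate}" a {entity_type} entity? '
            "Answer yes or no."
        )
        answer = ask_llm(question).strip().lower()
        if answer.startswith("yes"):
            kept.append(candidate)
    return kept


def toy_llm(question: str) -> str:
    """Stand-in for a real LLM call: says yes only for a fixed list of names."""
    known_locations = ("France", "Paris")
    return "Yes" if any(f'is "{name}" a' in question for name in known_locations) else "No"


# "Spring" was over-confidently marked as a location; verification filters it out.
verified = verify_entities(
    "Paris is lovely in Spring.", ["Paris", "Spring"], "location", toy_llm
)
```

The design trades extra model calls (one per candidate) for fewer false positives, which matches the over-marking problem the authors describe.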

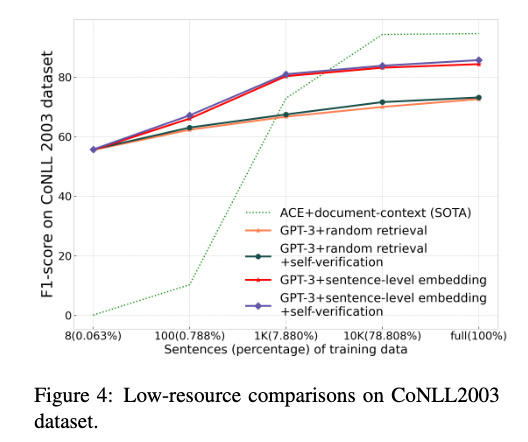

A fascinating point from this paper is that GPT-NER exhibits impressive proficiency in low-resource and few-shot NER setups. The figure below shows that the performance of the supervised model is far below that of GPT-3 when the training set is very small.

GPT-NER vs supervised methods in a low-resource setting on a dataset (Shuhe Wang et al.)

That seems to be very promising. Does this mean the answer ends here? Not at all. Details in the paper reveal a few things about the GPT-NER methodology that might not seem obvious at first glance.

A lot of details in the paper focus on how to select the few examples from the training dataset to supply within the LLM prompt (the authors call these “few-shot demonstration examples”). The main difference between this and a true few-shot setting is that the latter only has a few training examples available while the former has a lot more, i.e. we aren’t spoiled for choice in a true few-shot setting. In addition, the best demonstration example retrieval method uses a fine-tuned NER model. All this implies that an apples-to-apples comparison should be made but was not done in this paper. A benchmark should be created where the best few-shot method and pure-LLM methods are compared using the same (few) training examples, on datasets like Few-NERD.
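To illustrate why the size of the candidate pool matters, here is a toy sketch of demonstration selection. Note the assumption: we rank candidates by simple token overlap (Jaccard similarity), which is a stand-in of our own and not the paper’s retrieval method (which, as mentioned above, relies on a fine-tuned NER model). The function names and sentences are hypothetical.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Token-overlap similarity between two token sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def select_demonstrations(query: str, pool: list[str], k: int) -> list[str]:
    """Pick the k pool sentences most similar to the query sentence."""
    query_tokens = set(query.lower().split())
    ranked = sorted(
        pool,
        key=lambda s: jaccard(query_tokens, set(s.lower().split())),
        reverse=True,
    )
    return ranked[:k]


# A large training set gives the retriever something to choose from...
large_pool = [
    "The stock price fell sharply",
    "He moved to Berlin last year",
    "She flew to Paris last week",
]
query = "They flew to Rome last week"
best = select_demonstrations(query, large_pool, k=1)

# ...whereas in a true few-shot setting the pool IS the handful of examples,
# so selection cannot do better than whatever happens to be available.
tiny_pool = large_pool[:1]
forced = select_demonstrations(query, tiny_pool, k=1)
```

With the full pool the retriever returns the closely matching sentence; with the one-example pool it has to return an unrelated one, which is the gap between the paper’s setup and a true few-shot setting.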

That being said, it’s still fascinating that LLM-based methods like GPT-NER can achieve almost comparable performance against SOTA NER methods.

Which LLMs are best in Few-Shot NER?

Due to their popularity, OpenAI’s GPT series models, such as the GPT-3 series (davinci, text-davinci-001), have been the main focus for initial studies. In a paper titled “A Comprehensive Capability Analysis of GPT-3 and GPT-3.5 Series Models” that was first published in March 2023, Ye et al. claimed that while GPT-3 and ChatGPT achieve the best performance over 6 different NER datasets among the OpenAI GPT series models in the zero-shot setting, performance varies in the few-shot setting (1-shot and 3-shot), i.e. there is no clear winner.

How else can LLMs be used in Few-Shot NER (or related tasks)?

In previous studies, a variety of prompting methods have been presented. However, Zhou et al. put forth a unique approach where they used the method of targeted distillation. Instead of simply applying an LLM as is to the NER task via prompting, they train a smaller model, called a student, that aims to replicate the capabilities of a generalist language model on a specific task (in this case, named entity recognition).

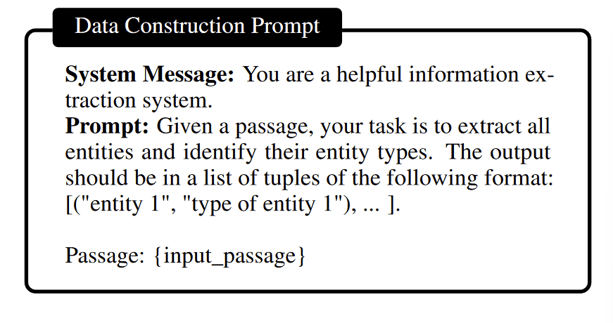

A student model is created in two main steps. First, they take samples of a large text dataset and use ChatGPT to find named entities in these samples and identify their types. Then these automatically annotated data are used as instructions to fine-tune a smaller, open-source LLM. The authors name this method “mission-focused instruction tuning”. This way, the smaller model learns to replicate the capabilities of the stronger model, which has more parameters. The new model only needs to perform well on a specific class of tasks, so it can actually outperform the model it learned from.

Prompting an LLM to generate entity mentions and their types (Zhou et al.)
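The second step, turning the teacher’s annotations into instruction-tuning data for the student, can be sketched roughly as follows. The record layout, the question wording, and the function name `to_instruction_examples` are assumptions made for illustration; the teacher annotations are hard-coded here instead of coming from a real ChatGPT call.

```python
import json


def to_instruction_examples(
    passage: str, teacher_entities: list[tuple[str, str]]
) -> list[dict[str, str]]:
    """Convert (mention, type) pairs from the teacher into one
    instruction/output record per entity type (hypothetical format)."""
    by_type: dict[str, list[str]] = {}
    for mention, entity_type in teacher_entities:
        by_type.setdefault(entity_type, []).append(mention)

    examples = []
    for entity_type, mentions in by_type.items():
        examples.append(
            {
                "instruction": f"Text: {passage}\nWhat describes {entity_type} in the text?",
                "output": json.dumps(mentions),
            }
        )
    return examples


# Step 1 (assumed already done): the teacher LLM annotated a sampled passage.
passage = "Marie Curie worked in Paris."
teacher_entities = [("Marie Curie", "person"), ("Paris", "location")]

# Step 2: build the records a smaller open-source model would be fine-tuned on.
examples = to_instruction_examples(passage, teacher_entities)
```

Each record pairs a question about one entity type with the teacher’s answer, so the student is trained only on the narrow task it needs to master.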

This technique enabled Zhou et al. to significantly outperform ChatGPT and a few other LLMs in NER.

Instead of few-shot NER, the authors focused on open-domain NER, which is a sub-task of NER that works across a wide variety of domains. This direction of research has proven to be an interesting exploration of the applications of GPT models and instruction tuning. The paper’s experiments show promising results, indicating they could potentially revolutionize the way we approach NER tasks and improve the systems’ efficiency and precision.

At the same time, there have been efforts focused on using open-source LLMs, which offer more transparency and options for experimentation. For example, Li et al. have recently proposed to leverage the internal representations within a large language model (specifically, LLaMA-2) and supervised fine-tuning to create better NER and text classification models. The authors claim to achieve state-of-the-art results on the CoNLL-2003 and OntoNotes datasets. Such extensions and modifications are only possible with open-source models, and it’s a promising sign that they have been getting more attention and may also be extended to few-shot NER in the future.

All in all

Few-shot NER using LLMs is still a relatively unexplored field. There are several trends and open-ended questions in this area. For instance, ChatGPT is still commonly used, but given the emergence of other proprietary and open-source LLMs, this could shift in the future. The answers to these questions won’t just shape the future of NER, but will also have a considerable impact on the broader field of machine learning.

Try out one of the LLMs on the Clarifai platform today. We also have a full blog post on how to Compare Top LLMs with LLM Battleground. Can’t find what you need? Consult our docs page or send us a message in our Community Discord channel.