Introduction

Databricks Lakehouse Monitoring lets you monitor all of your data pipelines, from data to features to ML models, without additional tools and complexity. Because it is built into Unity Catalog, you can track quality alongside governance and get deep insight into the performance of your data and AI assets. Lakehouse Monitoring is fully serverless, so you never have to worry about infrastructure or tuning compute configuration.

Our single, unified approach to monitoring makes it simple to track quality, diagnose errors, and find solutions directly in the Databricks Data Intelligence Platform. Keep reading to discover how you and your team can get the most out of Lakehouse Monitoring.

Why Lakehouse Monitoring?

Here’s a scenario: your data pipeline appears to be running smoothly, only for you to discover that the quality of the data has silently degraded over time. It’s a common problem among data engineers: everything seems fine until someone complains that the data is unusable.

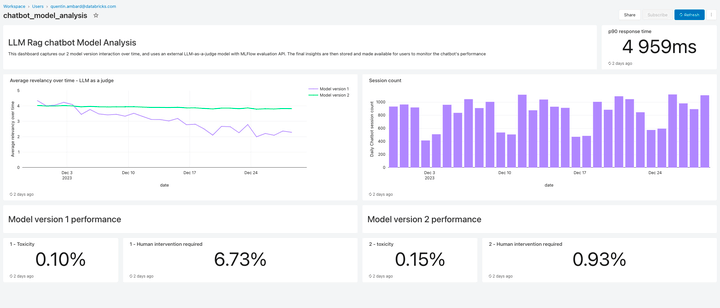

For those of you training ML models, tracking production model performance and comparing different versions is an ongoing challenge. As a result, teams are faced with models going stale in production and tasked with rolling them back.

The illusion of functional pipelines that mask crumbling data quality makes it challenging for data and AI teams to meet delivery and quality SLAs. Lakehouse Monitoring can help you proactively discover quality issues before downstream processes are impacted. You can stay ahead of potential issues, ensuring that pipelines run smoothly and machine learning models remain effective over time. No more weeks spent on debugging and rolling back changes!

How it works

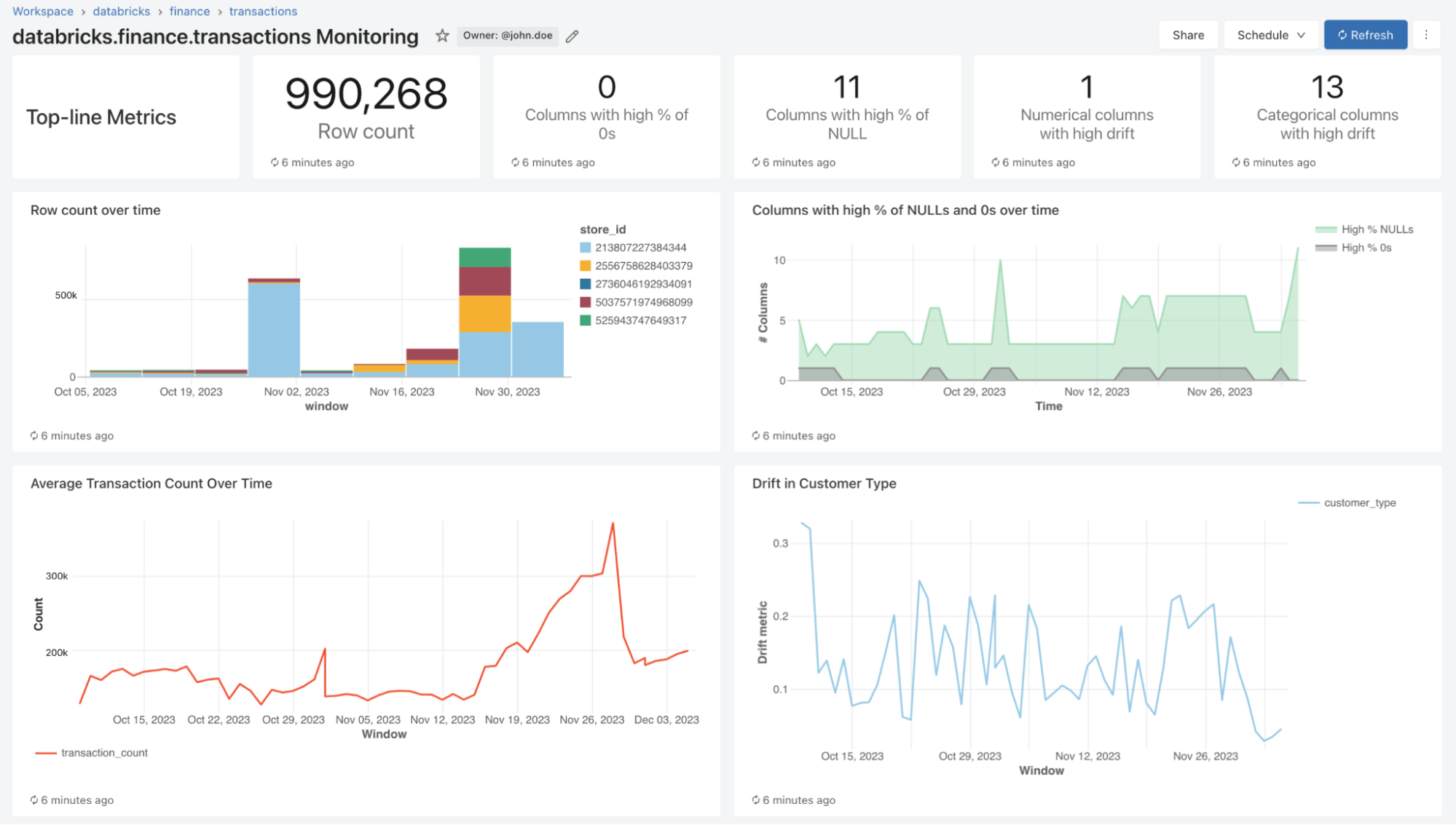

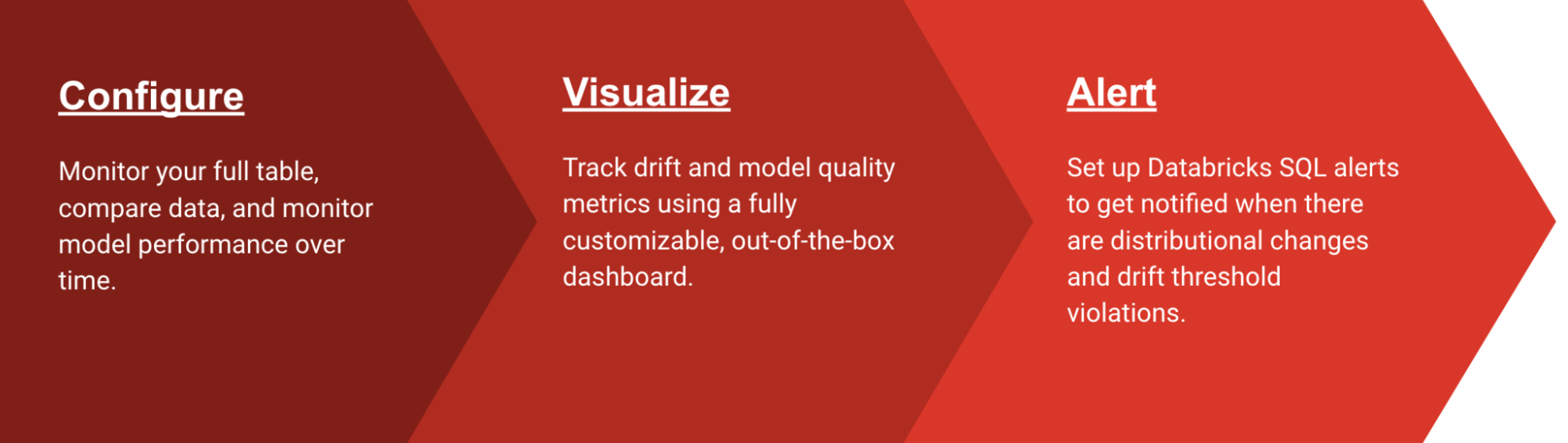

With Lakehouse Monitoring, you can monitor the statistical properties and quality of all your tables in just one click. We automatically generate a dashboard that visualizes data quality for any Delta table in Unity Catalog. Our product computes a rich set of metrics out of the box. For instance, if you’re monitoring an inference table, we provide model performance metrics such as R-squared and accuracy. Alternatively, for those monitoring data engineering tables, we provide distributional metrics including mean and min/max. In addition to the built-in metrics, you can also configure custom (business-specific) metrics that you want us to calculate. Lakehouse Monitoring refreshes metrics and keeps the dashboard up to date according to your specified schedule. All metrics are stored in Delta tables to enable ad hoc analyses, custom visualizations, and alerts.
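To make the idea of distributional metrics concrete, here is a minimal sketch in plain Python of the kind of per-column summary described above (null count, zero count, mean, min/max). It is an illustration only, not how Lakehouse Monitoring computes its metrics; the function name `profile_column` and the sample `fare_amounts` column are hypothetical.

```python
from statistics import mean

def profile_column(values):
    """Summarize one numeric column; None stands in for a null value."""
    non_null = [v for v in values if v is not None]
    return {
        "count": len(values),
        "num_nulls": len(values) - len(non_null),
        "num_zeros": sum(1 for v in non_null if v == 0),
        # Statistics are undefined for an all-null column, so report None.
        "mean": mean(non_null) if non_null else None,
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
    }

# Hypothetical sample column with one null and one zero.
fare_amounts = [12.5, 0, None, 7.5, 20.0]
metrics = profile_column(fare_amounts)
print(metrics)
```

In the product, the equivalent values are written to Delta tables on every refresh, so you would query them rather than compute them by hand.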

Configuring Monitoring

You can set up monitoring on any table you own using the Databricks UI (AWS | Azure) or API (AWS | Azure). Select the type of monitoring profile you want for your data pipelines or models:

- Snapshot Profile: If you want to monitor the full table over time or compare current data to previous versions or a known baseline, a Snapshot Profile will work best. We’ll then calculate metrics over all the data in the table and update metrics every time the monitor is refreshed.

- Time Series Profile: If your table contains event timestamps and you want to compare data distributions over windows of time (hourly, daily, weekly, …), then a Time Series Profile will work best. We recommend that you turn on Change Data Feed (AWS | Azure) so you can get incremental processing every time the monitor is refreshed. Note: you will need a timestamp column in order to configure this profile.

- Inference Log Profile: If you want to compare model performance over time or track how model inputs and predictions are shifting over time, an Inference Log Profile will work best. You will need an inference table (AWS | Azure) which contains inputs and outputs from an ML classification or regression model. You can also optionally include ground truth labels to calculate drift and other metadata such as demographic information to get fairness and bias metrics.
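The windowing idea behind a Time Series Profile can be sketched in a few lines of plain Python: rows are grouped by the day of their event timestamp, and a metric (here, the null rate) is computed per window. This is a simplified illustration under assumed names (`null_rate_by_day`, `event_ts`, `amount`), not the service's implementation.

```python
from collections import defaultdict
from datetime import datetime

def null_rate_by_day(rows, ts_col, value_col):
    """Group rows into daily windows and return the null rate per window."""
    windows = defaultdict(list)
    for row in rows:
        windows[row[ts_col].date()].append(row[value_col])
    return {
        day.isoformat(): sum(v is None for v in values) / len(values)
        for day, values in sorted(windows.items())
    }

# Hypothetical event rows spanning two days.
rows = [
    {"event_ts": datetime(2024, 1, 1, 9), "amount": 10.0},
    {"event_ts": datetime(2024, 1, 1, 17), "amount": None},
    {"event_ts": datetime(2024, 1, 2, 8), "amount": 4.0},
    {"event_ts": datetime(2024, 1, 2, 12), "amount": 6.0},
]
daily_null_rate = null_rate_by_day(rows, "event_ts", "amount")
print(daily_null_rate)
```

A Snapshot Profile corresponds to treating the whole table as a single window instead of splitting it by timestamp.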

You can choose how often you want our monitoring service to run. Many customers choose a daily or hourly schedule to ensure the freshness and relevance of their data. If you want monitoring to automatically run at the end of data pipeline execution, you can also call the API to refresh monitoring directly in your Workflow.

To further customize monitoring, you can set slicing expressions to monitor feature subsets of the table in addition to the table as a whole. You can slice on any specific column, e.g. ethnicity or gender, to generate fairness and bias metrics. You can also define custom metrics based on columns in your primary table or on top of out-of-the-box metrics. See how to use custom metrics (AWS | Azure) for more details.
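As a rough illustration of slicing, the sketch below computes one metric (accuracy) for the table as a whole and again for each value of a slice column. The helper names, the `__all__` key, and the sample rows are hypothetical; in the product you declare slicing expressions on the monitor rather than writing this logic yourself.

```python
def accuracy(rows):
    """Fraction of rows whose prediction matches the ground truth label."""
    return sum(r["prediction"] == r["label"] for r in rows) / len(rows)

def accuracy_by_slice(rows, slice_col):
    """Accuracy over the whole table plus one entry per slice value."""
    slices = {}
    for r in rows:
        slices.setdefault(r[slice_col], []).append(r)
    result = {"__all__": accuracy(rows)}
    for value, subset in slices.items():
        result[f"{slice_col}={value}"] = accuracy(subset)
    return result

# Hypothetical inference log with a demographic column to slice on.
inference_rows = [
    {"gender": "A", "prediction": 1, "label": 1},
    {"gender": "A", "prediction": 0, "label": 0},
    {"gender": "B", "prediction": 1, "label": 0},
    {"gender": "B", "prediction": 1, "label": 1},
]
sliced = accuracy_by_slice(inference_rows, "gender")
print(sliced)
```

A gap between slices, such as the one between `gender=A` and `gender=B` here, is the kind of signal fairness and bias metrics are meant to surface.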

Visualize Quality

As part of a refresh, we’ll scan your tables and models to generate metrics that track quality over time. We calculate two types of metrics that we store in Delta tables for you:

- Profile Metrics: They provide summary statistics of your data. For example, you can track the number of nulls and zeros in your table or accuracy metrics for your model. See the profile metrics table schema (AWS | Azure) for more information.

- Drift Metrics: They provide statistical drift metrics that allow you to compare against your baseline tables. See the drift metrics table schema (AWS | Azure) for more information.
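To give a feel for what a drift metric measures, here is a small sketch of one common statistic, the total variation distance between the category frequencies of a baseline and a current sample. It is chosen here for simplicity and is not necessarily the exact statistic stored in the drift metrics table; the function name and sample data are hypothetical.

```python
from collections import Counter

def total_variation_distance(baseline, current):
    """Half the sum of absolute differences between category frequencies.

    Returns 0.0 for identical distributions and 1.0 for disjoint ones.
    """
    p, q = Counter(baseline), Counter(current)
    n_p, n_q = len(baseline), len(current)
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p[c] / n_p - q[c] / n_q) for c in categories)

# Hypothetical payment-type column: the share of "cash" grows from 20% to 50%.
baseline_payments = ["card"] * 8 + ["cash"] * 2
current_payments = ["card"] * 5 + ["cash"] * 5
drift = total_variation_distance(baseline_payments, current_payments)
print(round(drift, 3))
```

A value near 0 means the current data looks like the baseline; larger values indicate that the distribution has shifted.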

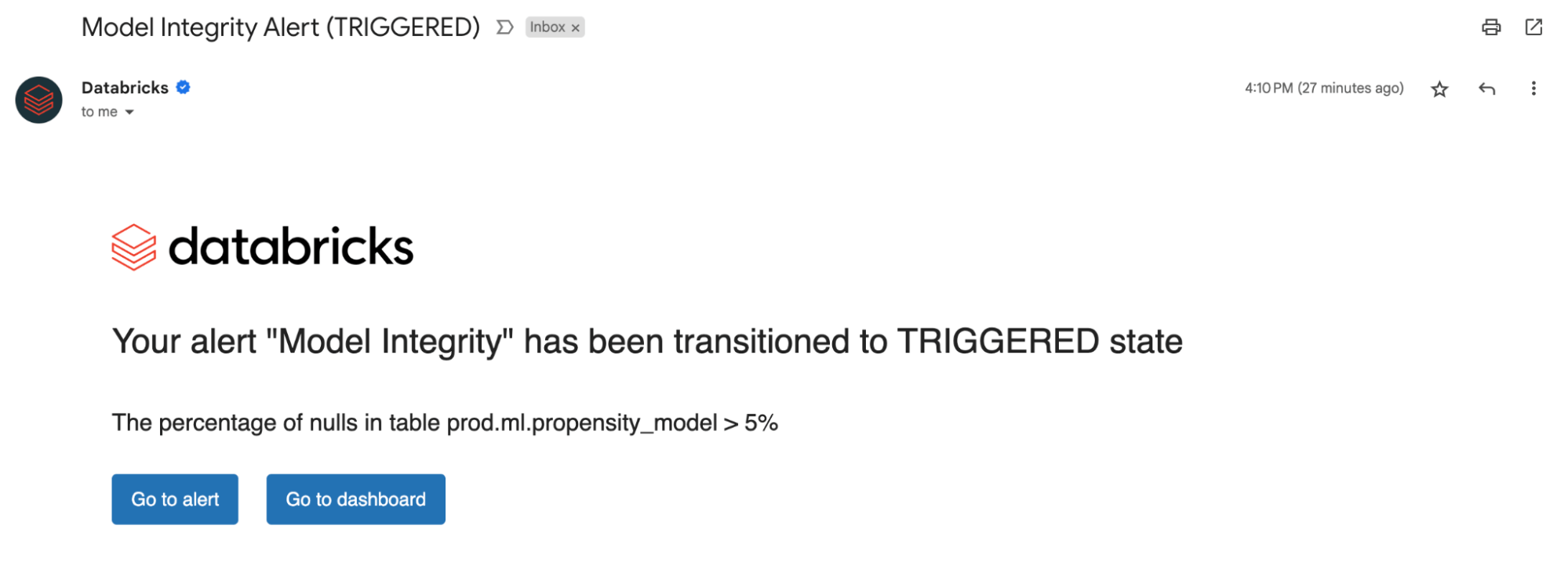

To visualize all these metrics, Lakehouse Monitoring provides an out-of-the-box dashboard that’s fully customizable. You can also create Databricks SQL alerts (AWS | Azure) to get notified on threshold violations, changes to data distribution, and drift from your baseline table.

Setting up Alerts

Whether you’re monitoring data tables or models, setting up alerts on our computed metrics notifies you of potential errors and helps prevent downstream risks.

You can get alerted if the percent of nulls and zeros exceeds a certain threshold or undergoes changes over time. If you are monitoring models, you can get alerted if model performance metrics like toxicity or drift fall below certain quality thresholds.
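The threshold logic behind such an alert can be sketched as a simple comparison of computed metrics against limits. In practice you would define this as a Databricks SQL alert over the metric tables; the plain-Python version below only illustrates the check, and the metric names and limits are hypothetical.

```python
def check_thresholds(metrics, thresholds):
    """Return one message per metric whose value exceeds its limit."""
    return [
        f"{name}={metrics[name]:.2f} exceeds {limit:.2f}"
        for name, limit in thresholds.items()
        if name in metrics and metrics[name] > limit
    ]

# Hypothetical latest metric values and the limits chosen for them.
latest_metrics = {"percent_null": 0.12, "percent_zeros": 0.01}
limits = {"percent_null": 0.05, "percent_zeros": 0.10}
alerts = check_thresholds(latest_metrics, limits)
print(alerts)
```

For metrics where lower is worse, such as accuracy, the comparison would be reversed so that the alert fires when the value falls below its limit.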

Now, with insights derived from our alerts, you can identify whether a model needs retraining or if there are potential issues with your source data. After you’ve addressed issues, you can manually call the refresh API to get the latest metrics for your updated pipeline. Lakehouse Monitoring helps you proactively take actions to maintain the overall health and reliability of your data and models.

Monitor LLM Quality

Lakehouse Monitoring offers a fully managed quality solution for Retrieval Augmented Generation (RAG) applications. It scans your application outputs for toxic or otherwise unsafe content. You can quickly diagnose errors related to, for example, stale data pipelines or unexpected model behavior. Lakehouse Monitoring fully manages monitoring pipelines, freeing developers to focus on their applications.

What’s coming next?

We’re excited for the future of Lakehouse Monitoring and looking forward to supporting:

- Data classification / PII detection: sign up for our Private Preview here!

- Expectations to automatically enforce data quality rules and orchestrate your pipelines

- A holistic view of your monitors to summarize the quality and health across your tables

To learn more about Lakehouse Monitoring and get started today, visit our product documentation (AWS | Azure). Additionally, catch up on the recent announcements about creating high-quality RAG applications, and join us for our GenAI webinar.