Spark on AWS Lambda (SoAL) is a framework that runs Apache Spark workloads on AWS Lambda. It's designed for both batch and event-based workloads, handling data payload sizes from 10 KB to 400 MB. This framework is ideal for batch analytics workloads from Amazon Simple Storage Service (Amazon S3) and event-based streaming from Amazon Managed Streaming for Apache Kafka (Amazon MSK) and Amazon Kinesis. The framework seamlessly integrates data with platforms like Apache Iceberg, Delta Lake, Apache Hudi, Amazon Redshift, and Snowflake, providing a low-cost and scalable data processing solution. SoAL provides a framework that lets you run data-processing engines like Apache Spark and take advantage of the benefits of serverless architecture, such as automatic scaling of compute for analytics workloads.

This post highlights the SoAL architecture, provides infrastructure as code (IaC), presents step-by-step instructions for setting up the SoAL framework in your AWS account, and outlines SoAL architectural patterns for enterprises.

Solution overview

Apache Spark offers cluster mode and local mode deployments, with the former incurring latency due to cluster initialization and warmup. Although Apache Spark's cluster-based engines are commonly used for data processing, especially with ACID frameworks, they exhibit high resource overhead and slower performance for payloads under 50 MB compared to the more efficient pandas library for smaller datasets. Compared to Apache Spark cluster mode, local mode provides faster initialization and better performance for small analytics workloads. On the SoAL framework, Apache Spark local mode is optimized for small analytics workloads and cluster mode is optimized for larger analytics workloads, making SoAL a versatile framework for enterprises.

We provide an AWS Serverless Application Model (AWS SAM) template, available in the GitHub repo, to deploy the SoAL framework in an AWS account. The AWS SAM template builds the Docker image, pushes it to the Amazon Elastic Container Registry (Amazon ECR) repository, and then creates the Lambda function. The AWS SAM template speeds up the setup and adoption of the SoAL framework for AWS customers.

SoAL architecture

The SoAL framework provides local mode and containerized Apache Spark running on Lambda. In the SoAL framework, Lambda runs in a Docker container with Apache Spark and AWS dependencies installed. On invocation, the SoAL framework's Lambda handler fetches the PySpark script from an S3 folder and submits the Spark job on Lambda. The logs for the Spark jobs are recorded in Amazon CloudWatch.
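The invocation flow above can be sketched as a small Lambda handler. This is a minimal illustration, not the SoAL repository's actual handler: it assumes `SCRIPT_LOCATION` holds the script's object key, that Spark runs with `--master local[*]`, and that the script accepts the event through a hypothetical `--event` argument. The downloader and runner are injectable so the logic can be exercised without AWS access.

```python
import json
import os
import subprocess
import tempfile
from typing import Callable, Dict, List, Optional

# (bucket, key, local_path) -> None; boto3's S3 client.download_file(Bucket, Key, Filename) fits this shape.
Downloader = Callable[[str, str, str], None]
# Runs a command and returns its exit code.
Runner = Callable[[List[str]], int]


def build_spark_submit(script_path: str, event: dict) -> List[str]:
    """Run the script in Spark local mode and pass the Lambda event as JSON."""
    return [
        "spark-submit",
        "--master", "local[*]",        # local mode: driver and executors share one JVM
        script_path,                   # application arguments must follow the script path
        "--event", json.dumps(event),  # hypothetical argument name
    ]


def _run_subprocess(cmd: List[str]) -> int:
    # The child inherits stdout/stderr, which Lambda forwards to CloudWatch Logs.
    return subprocess.run(cmd, check=False).returncode


def handler(event: dict, context: object,
            download: Optional[Downloader] = None,
            run: Optional[Runner] = None) -> Dict[str, int]:
    bucket = os.environ["SCRIPT_BUCKET"]
    key = os.environ["SCRIPT_LOCATION"]  # assumed to be the script's object key
    if download is None:
        import boto3  # imported lazily so the sketch can be tested without boto3
        download = boto3.client("s3").download_file
    run = run or _run_subprocess
    # On Lambda, gettempdir() resolves to /tmp, the writable scratch space.
    local_path = os.path.join(tempfile.gettempdir(), os.path.basename(key))
    download(bucket, key, local_path)
    return {"returncode": run(build_spark_submit(local_path, event))}
```

Keeping command construction in its own function makes the exact `spark-submit` arguments easy to inspect and test separately from the S3 download and process execution.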

For both streaming and batch tasks, the Lambda event is passed to the PySpark script as a named argument. Using a container-based image cache together with the warm instance features of Lambda, the overall JVM warmup time was reduced from approximately 70 seconds to under 30 seconds. The framework was observed to perform well with batch payloads up to 400 MB and with streaming data from Amazon MSK and Kinesis. The per-session cost for any given analytics workload depends on the number of requests, the run duration, and the memory configured for the Lambda functions.
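The cost factors listed above combine in a simple formula: a per-request charge plus compute billed in GB-seconds (duration multiplied by configured memory). The sketch below is a rough estimator under stated assumptions: it ignores the free tier and additional ephemeral storage charges, and the prices are placeholders you should replace with current values for your Region and architecture.

```python
def lambda_cost(requests: int, avg_duration_s: float, memory_mb: int,
                price_per_request: float, price_per_gb_second: float) -> float:
    """Estimate Lambda cost, ignoring the free tier and extra ephemeral storage."""
    # Compute is billed in GB-seconds: duration x configured memory (MB / 1024).
    gb_seconds = requests * avg_duration_s * (memory_mb / 1024)
    return requests * price_per_request + gb_seconds * price_per_gb_second


# Placeholder prices: look up current values for your Region and architecture.
PRICE_PER_REQUEST = 0.20 / 1_000_000
PRICE_PER_GB_SECOND = 0.0000166667

# 1,000 one-minute Spark runs at 10,240 MB, the maximum Lambda memory setting.
estimate = lambda_cost(requests=1_000, avg_duration_s=60, memory_mb=10_240,
                       price_per_request=PRICE_PER_REQUEST,
                       price_per_gb_second=PRICE_PER_GB_SECOND)
print(f"${estimate:.2f}")
```

Because compute dominates for Spark jobs, shortening JVM warmup (as described above) lowers cost roughly in proportion to the seconds saved per invocation.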

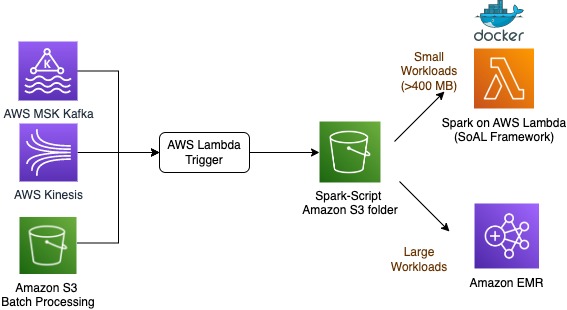

The next diagram illustrates the SoAL structure.

Enterprise architecture

The PySpark script is written in standard Spark and is compatible with the SoAL framework, Amazon EMR, Amazon EMR Serverless, and AWS Glue. If needed, you can run the PySpark scripts in cluster mode on Amazon EMR, EMR Serverless, and AWS Glue. For analytics workloads between a few KB and 400 MB, you can use the SoAL framework on Lambda; for larger analytics workloads over 400 MB, you can run the same PySpark script on AWS cluster-based services like Amazon EMR, EMR Serverless, and AWS Glue. The extensible script and architecture make SoAL a scalable framework for enterprise analytics workloads. The following diagram illustrates this architecture.
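The size-based routing described above can be sketched as follows. This is a minimal illustration, not part of SoAL: the function names and runtime labels are hypothetical, the 400 MB threshold comes from this post (interpreted here as MiB), and `prefix_size` expects a boto3 S3 client or any object exposing the same `get_paginator` interface.

```python
from typing import Any

# Size ceiling for SoAL on Lambda cited in this post; treating "MB" as MiB is an assumption.
SOAL_MAX_BYTES = 400 * 1024 * 1024


def prefix_size(s3_client: Any, bucket: str, prefix: str) -> int:
    """Sum the sizes of all objects under an S3 prefix."""
    total = 0
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        for obj in page.get("Contents", []):  # empty pages carry no "Contents" key
            total += obj["Size"]
    return total


def choose_runtime(payload_bytes: int) -> str:
    """Pick where to run the same PySpark script based on payload size."""
    if payload_bytes < 0:
        raise ValueError("payload size cannot be negative")
    if payload_bytes <= SOAL_MAX_BYTES:
        return "soal-lambda"                 # Spark local mode inside Lambda
    return "emr-emr-serverless-or-glue"      # cluster mode for larger payloads
```

Because the script itself is unchanged across targets, the routing decision only has to pick an execution environment, not a code path.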

Prerequisites

To implement this solution, you need an AWS Identity and Access Management (IAM) role with permissions for AWS CloudFormation, Amazon ECR, Lambda, and AWS CodeBuild.
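As a starting point, the prerequisite can be expressed as an IAM policy document. This sketch generates a deliberately broad policy covering only the four services listed above; it is not least-privilege, and the statement ID is hypothetical. Depending on how you deploy, AWS SAM typically also needs Amazon S3 access for packaged artifacts and IAM actions such as `iam:PassRole` when the template creates roles, so extend `services` or add statements as required.

```python
import json

# Services named in the prerequisites. Wildcard actions are a broad starting
# point for experimentation, not a least-privilege policy.
SOAL_SERVICES = ("cloudformation", "ecr", "lambda", "codebuild")


def build_deployment_policy(services=SOAL_SERVICES) -> dict:
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "SoalDeployment",  # hypothetical statement ID
                "Effect": "Allow",
                "Action": [f"{service}:*" for service in services],
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_deployment_policy(), indent=2))
```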

Set up the solution

To set up the solution in an AWS account, complete the following steps:

- Clone the GitHub repository to local storage and change to the CloudFormation folder within the cloned repository:

- Run the AWS SAM template sam-imagebuilder.yaml using the following command with the stack name and framework of your choice. In this example, the framework is Apache Hudi:

The command deploys a CloudFormation stack called spark-on-lambda-image-builder and runs a CodeBuild project that builds the Docker image and pushes it to Amazon ECR with the latest tag. The command takes a ParameterValue that selects the open-source framework (Delta Lake, Apache Hudi, or Apache Iceberg).
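If you script the deployment, a small Python wrapper can assemble the `sam deploy` arguments and validate the framework choice. The flags used (`--template-file`, `--stack-name`, `--capabilities`, `--resolve-s3`, `--parameter-overrides`) are standard SAM CLI options, but the parameter key `FRAMEWORK`, the accepted values, and the capability setting are assumptions; check the template's `Parameters` section before relying on them.

```python
import shlex
from typing import List

# Accepted framework values are an assumption; check the template's Parameters section.
FRAMEWORKS = {"DELTA", "HUDI", "ICEBERG"}


def sam_deploy_command(stack_name: str, framework: str,
                       template: str = "sam-imagebuilder.yaml") -> List[str]:
    framework = framework.upper()
    if framework not in FRAMEWORKS:
        raise ValueError(f"unsupported framework: {framework}")
    return [
        "sam", "deploy",
        "--template-file", template,
        "--stack-name", stack_name,
        "--capabilities", "CAPABILITY_IAM",  # the template may need CAPABILITY_NAMED_IAM instead
        "--resolve-s3",                     # let SAM use a managed bucket for artifacts
        # "FRAMEWORK" is a hypothetical parameter key.
        "--parameter-overrides", f"ParameterKey=FRAMEWORK,ParameterValue={framework}",
    ]


if __name__ == "__main__":
    # Print the command for review before running it.
    print(shlex.join(sam_deploy_command("spark-on-lambda-image-builder", "hudi")))
```

To actually deploy, pass the returned list to `subprocess.run(cmd, check=True)`; returning a list rather than a shell string avoids quoting problems with the comma-separated override.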

- After the stack has been successfully deployed, copy the ECR repository URI (spark-on-lambda-image-builder) that's displayed in the output of the stack.
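Instead of copying the URI by hand, you can read it from the stack outputs. The sketch below parses the response shape returned by CloudFormation `DescribeStacks` (the same JSON that `aws cloudformation describe-stacks` prints). The output key name is hypothetical; use the key shown in your stack's Outputs tab.

```python
from typing import Any, Optional


def find_stack_output(response: dict, output_key: str) -> Optional[str]:
    """Return OutputValue for output_key from a DescribeStacks response, or None."""
    for stack in response.get("Stacks", []):
        for output in stack.get("Outputs", []):
            if output.get("OutputKey") == output_key:
                return output.get("OutputValue")
    return None


def ecr_uri_from_stack(stack_name: str, output_key: str, client: Any = None) -> Optional[str]:
    if client is None:
        import boto3  # lazy import keeps the parser testable without boto3
        client = boto3.client("cloudformation")
    return find_stack_output(client.describe_stacks(StackName=stack_name), output_key)
```

For example, `ecr_uri_from_stack("spark-on-lambda-image-builder", "EcrRepositoryUri")`, where `EcrRepositoryUri` stands in for the real output key.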

- Run the AWS SAM Lambda package command with the required Region and ECR repository:

This command creates the Lambda function with the container image from the ECR repository. An output file, packaged-template.yaml, is created in the local directory.
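Because the Region and the ECR repository must agree, one option is to derive the Region from the repository URI. This sketch builds a `sam package` argument list using the standard `--template-file`, `--output-template-file`, `--image-repository`, and `--region` options; the input template name `template.yaml` is a hypothetical placeholder for the Lambda template in the repository.

```python
import re
from typing import List

# Private ECR repository URIs look like <account>.dkr.ecr.<region>.amazonaws.com/<repo>.
ECR_URI = re.compile(
    r"^(?P<account>\d{12})\.dkr\.ecr\.(?P<region>[a-z0-9-]+)"
    r"\.amazonaws\.com(?:\.cn)?/(?P<repo>[^:@]+)$"
)


def region_from_ecr_uri(uri: str) -> str:
    match = ECR_URI.match(uri)
    if match is None:
        raise ValueError(f"not a private ECR repository URI: {uri}")
    return match.group("region")


def sam_package_command(image_repository: str,
                        template: str = "template.yaml",  # hypothetical file name
                        output: str = "packaged-template.yaml") -> List[str]:
    return [
        "sam", "package",
        "--template-file", template,
        "--output-template-file", output,
        "--image-repository", image_repository,
        # Deriving --region from the URI keeps the two consistent.
        "--region", region_from_ecr_uri(image_repository),
    ]
```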

- Optionally, to publish the AWS SAM application to the AWS Serverless Application Repository, run the following command. This lets you share the AWS SAM template through the AWS Serverless Application Repository console and lets other developers use quick deployments in the future.

After you run this command, a Lambda function is created using the SoAL framework runtime.

- To test it, use the PySpark scripts from the spark-scripts folder. Place the sample script and the accomodations.csv dataset in an S3 folder and provide the location through the Lambda environment variables SCRIPT_BUCKET and SCRIPT_LOCATION.
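The environment variables can also be set programmatically with the Lambda `UpdateFunctionConfiguration` API. One detail matters here: the call replaces the function's entire `Variables` map, so this sketch merges the existing variables first. The function name and object key in the test are hypothetical, and treating `SCRIPT_LOCATION` as an object key (rather than a folder) is an assumption.

```python
from typing import Any, Dict, Optional


def script_env_update(function_name: str, bucket: str, key: str,
                      existing: Optional[Dict[str, str]] = None) -> Dict[str, Any]:
    """Build kwargs for update_function_configuration, keeping other variables."""
    variables = dict(existing or {})
    variables.update({"SCRIPT_BUCKET": bucket, "SCRIPT_LOCATION": key})
    return {"FunctionName": function_name, "Environment": {"Variables": variables}}


def point_function_at_script(function_name: str, bucket: str, key: str,
                             client: Any = None) -> Any:
    if client is None:
        import boto3  # lazy import so the helper above is testable offline
        client = boto3.client("lambda")
    config = client.get_function_configuration(FunctionName=function_name)
    current = config.get("Environment", {}).get("Variables", {})
    # The Variables map is replaced as a whole, so merge before updating.
    return client.update_function_configuration(
        **script_env_update(function_name, bucket, key, current))
```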

After Lambda is invoked, it downloads the PySpark script from the S3 folder to the container's local storage and runs it on the SoAL framework container using spark-submit. The Lambda event is also passed to the PySpark script.
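On the script side, the event arrives as a command-line argument. The sketch below assumes a hypothetical `--event` flag carrying JSON and shows how a batch script might turn an S3 event notification into readable paths. It handles only the S3 notification shape (`Records[].s3.bucket.name` and `Records[].s3.object.key`); Kinesis and MSK events have different structures. The `s3a` scheme assumes the Hadoop S3A connector in the container.

```python
import argparse
import json
from typing import List, Optional
from urllib.parse import unquote_plus


def parse_event(argv: Optional[List[str]] = None) -> dict:
    """Read the Lambda event passed as a JSON string via --event (hypothetical flag)."""
    parser = argparse.ArgumentParser(description="SoAL PySpark job (sketch)")
    parser.add_argument("--event", default="{}", help="Lambda event encoded as JSON")
    args, _unknown = parser.parse_known_args(argv)  # tolerate extra arguments
    return json.loads(args.event)


def s3_paths_from_event(event: dict, scheme: str = "s3a") -> List[str]:
    """Turn S3 event notification records into paths Spark can read."""
    paths = []
    for record in event.get("Records", []):
        s3 = record.get("s3", {})
        bucket = s3.get("bucket", {}).get("name")
        key = s3.get("object", {}).get("key")
        if bucket and key:
            # Object keys in S3 event notifications are URL-encoded.
            paths.append(f"{scheme}://{bucket}/{unquote_plus(key)}")
    return paths
```

Inside the PySpark script, each returned path could then be loaded with `spark.read.csv(path, header=True)`.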

Clean up

Deploying an AWS SAM template incurs costs. Delete the Docker image from Amazon ECR, delete the Lambda function, and remove all the files and scripts from the S3 location. You can also use the following command to delete the stack:
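The manual cleanup steps can be scripted with boto3. This is a sketch with injected clients; all resource names are supplied by you, and it assumes the image carries the `latest` tag mentioned earlier. Images are deleted before the stack because CloudFormation can fail to delete an ECR repository that still contains images.

```python
from typing import Any, Iterable


def clean_up(ecr: Any, lambda_client: Any, s3: Any, cloudformation: Any,
             repository: str, function_name: str, bucket: str,
             keys: Iterable[str], stack_name: str) -> None:
    """Remove the SoAL test resources; pass boto3 clients for each service."""
    # Empty the repository first so the stack can delete it afterwards.
    ecr.batch_delete_image(repositoryName=repository,
                           imageIds=[{"imageTag": "latest"}])
    lambda_client.delete_function(FunctionName=function_name)
    for key in keys:  # the sample script and dataset uploaded for testing
        s3.delete_object(Bucket=bucket, Key=key)
    cloudformation.delete_stack(StackName=stack_name)
```

In practice you would call it with `boto3.client("ecr")`, `boto3.client("lambda")`, `boto3.client("s3")`, and `boto3.client("cloudformation")`.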

Conclusion

The SoAL framework lets you run serverless Apache Spark tasks on AWS Lambda efficiently and cost-effectively. Beyond cost savings, it provides fast processing times for small to medium files. As a holistic enterprise vision, SoAL bridges the gap between big and small data processing, using the power of the Apache Spark runtime across both Lambda and other cluster-based AWS resources.

Follow the steps in this post to use the SoAL framework in your AWS account, and leave a comment if you have any questions.

About the authors

John Cherian is a Senior Solutions Architect (SA) at Amazon Web Services who helps customers with strategy and architecture for building solutions on AWS.

Emerson Antony is a Senior Cloud Architect at Amazon Web Services who helps customers implement AWS solutions.

Kiran Anand is a Principal AWS Data Lab Architect at Amazon Web Services who helps customers with big data and analytics architecture.